Basic Cluster Environment Setup

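The basic k8s cluster environment consists of deploying and running the Kubernetes control-plane (master) services and the services on the worker (node) hosts. The latest release at the time of writing is 1.15+, which may not yet be stable, so this deployment uses Kubernetes 1.13.5.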

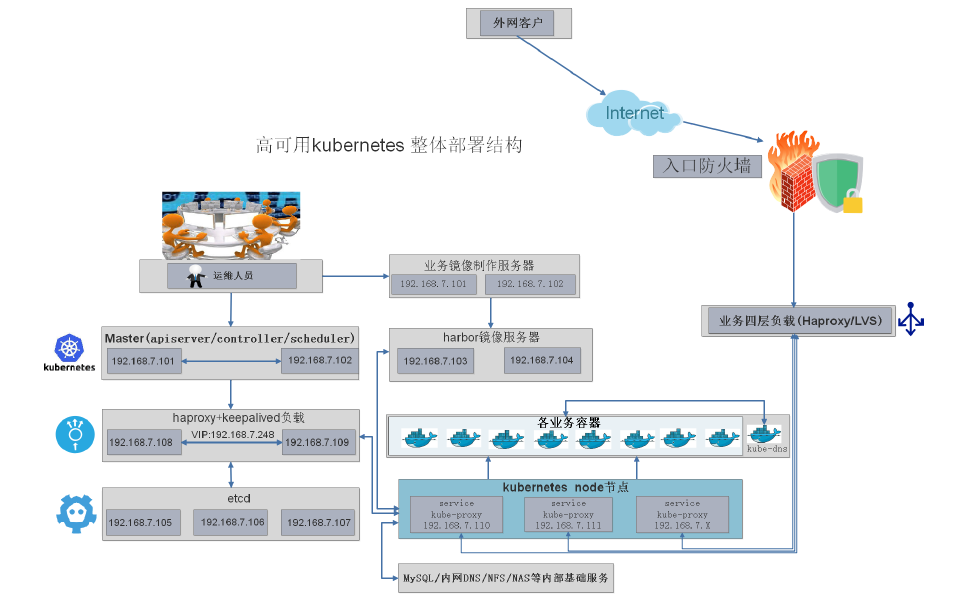

Kubernetes设计架构:

部署单master参看:

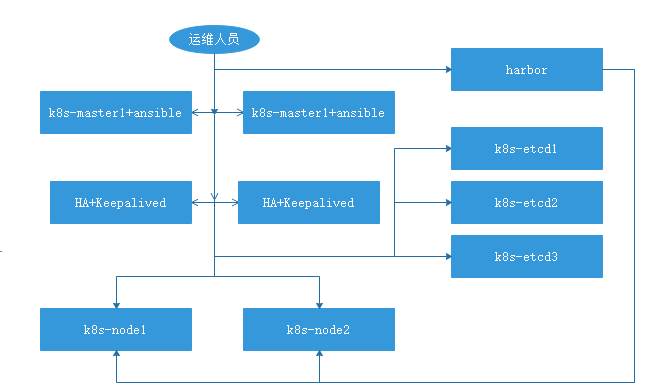

部署多master:

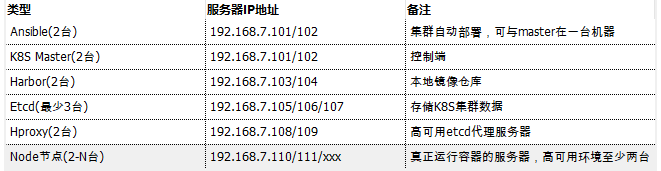

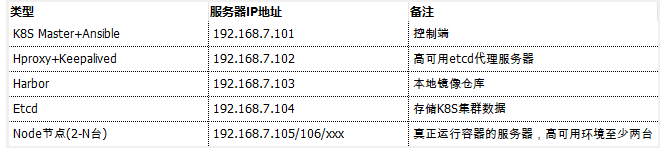

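Server inventory:

High-availability layout:

Simplified layout:

Software list:

API port:

Port: 192.168.7.248:6443  # must be reverse-proxied by the load balancer; the dashboard port is 8443

Operating system: Ubuntu Server 18.04 (1804-01)

k8s version: 1.13.5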

calico:3.4.4

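Basic environment preparation:

Configure Keepalived and HAProxy load balancing:

# apt-get install haproxy keepalived -y

# cp /usr/share/doc/keepalived/samples/keepalived.conf.sample /etc/keepalived/keepalived.conf  # start from the sample file
# vim /etc/keepalived/keepalived.conf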

vrrp_instance VI_1 {
    state MASTER
    interface eth1
    virtual_router_id 50
    #nopreempt
    priority 100
    advert_int 1
    virtual_ipaddress {
        192.168.7.248 dev eth1 label eth1:0
    }
}
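The file above configures the MASTER side of the VIP. For the load balancer itself to be highly available, a second HAProxy/Keepalived host would normally run the same VRRP instance with `state BACKUP`, a lower `priority` and the same `virtual_router_id`. Below is a minimal sketch that renders either variant. It assumes the interface and VIP used here. The function name `gen_keepalived` and the backup priority of 80 are illustrative choices, not values from the original setup:

```shell
# Render the VRRP instance used in this document for either role.
# Usage: gen_keepalived STATE PRIORITY [IFACE]
#   STATE     MASTER or BACKUP
#   PRIORITY  the node with the highest priority holds the VIP
#   IFACE     network interface, defaults to eth1 as above
gen_keepalived() {
  local state=$1 priority=$2 iface=${3:-eth1}
  cat <<EOF
vrrp_instance VI_1 {
    state ${state}
    interface ${iface}
    virtual_router_id 50
    priority ${priority}
    advert_int 1
    virtual_ipaddress {
        192.168.7.248 dev ${iface} label ${iface}:0
    }
}
EOF
}

# On a second (hypothetical) load-balancer host:
# gen_keepalived BACKUP 80 > /etc/keepalived/keepalived.conf
```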

# vim /etc/haproxy/haproxy.cfg

listen stats
    mode http
    bind 0.0.0.0:9999
    stats enable
    log global
    stats uri /haproxy-status
    stats auth haadmin:123456

listen k8s_api_server_6443
    bind 192.168.7.248:6443
    mode tcp
    log global
    # balance source
    server 192.168.7.101 192.168.7.101:6443 check inter 3000 fall 2 rise 5
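Only one apiserver backend, 192.168.7.101, is listed above. Once more masters join, each one needs its own `server` line in the `listen k8s_api_server_6443` block, typically with the same health-check settings. This document adds 192.168.7.107 later. Here is a small generator, assuming every master serves on 6443; `gen_api_backends` is a hypothetical helper:

```shell
# Print one HAProxy "server" line per apiserver address, reusing the
# health-check settings of the listen block above (port 6443 assumed).
gen_api_backends() {
  local ip
  for ip in "$@"; do
    printf '    server %s %s:6443 check inter 3000 fall 2 rise 5\n' "$ip" "$ip"
  done
}

# Example: backends for the original master plus the one added later
# gen_api_backends 192.168.7.101 192.168.7.107
```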

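HTTPS for Harbor:

Reference blog posts:

The CA certificate can be given to Harbor directly, i.e. Harbor uses the self-signed CA certificate itself as its server certificate. There is no need to create a self-signed CA and then use it to issue a separate certificate for Harbor.

Issue it as follows and give the certificate directly to Harbor: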

# openssl genrsa -out /usr/local/src/harbor/certs/harbor-ca.key  # generate the private key

# openssl req -x509 -new -nodes -key /usr/local/src/harbor/certs/harbor-ca.key -subj "/CN=harbor.martinhe.com" -days 7120 -out /usr/local/src/harbor/certs/harbor-ca.crt  # self-sign the certificate

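Deployment with Ansible:

The K8S cluster is installed with Ansible playbooks from the kubeasz project. The project also explains how the components interact. The installation is simple and direct, and it is not affected by network restrictions in mainland China.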

https://github.com/easzlab/kubeasz

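Basic environment preparation (on the master host):

# apt-get install git python2.7 -y  # the ansible playbooks used here are written for Python 2.7

#Python 2.7 (and the symlink below) must be installed on every node that ansible deploys to

# ln -s /usr/bin/python2.7 /usr/bin/python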

# apt-get install ansible -y

# ssh-keygen
Generating public/private rsa key pair.
Enter file in which to save the key (/root/.ssh/id_rsa):
Enter passphrase (empty for no passphrase):
Enter same passphrase again:
Your identification has been saved in /root/.ssh/id_rsa.
Your public key has been saved in /root/.ssh/id_rsa.pub.
The key fingerprint is:
SHA256:lMjzANt2DXdc0sOtroTFbUdDVv3UO394nxVbe6gH6CA root@k8s-master.martinhe.com


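# apt-get install sshpass -y  # used to copy the public key over SSH without an interactive password prompt

#Script to distribute the public key:

#!/bin/bash
# Target host list
IP="
192.168.7.101
192.168.7.102
192.168.7.103
192.168.7.104
192.168.7.105
192.168.7.106
"

for node in ${IP}; do
    # ssh options (-o ...) must come before the target host
    if sshpass -p 920410 ssh-copy-id -o StrictHostKeyChecking=no "${node}"; then
        echo "${node}: key copied"
    else
        echo "${node}: key copy failed"
    fi
done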

#Script to sync the Harbor certificate and related files to every Docker host:

#!/bin/bash
# Target host list
IP="
192.168.7.101
192.168.7.102
192.168.7.103
192.168.7.104
192.168.7.105
192.168.7.106
"

for node in ${IP}; do
    if sshpass -p 920410 ssh-copy-id -o StrictHostKeyChecking=no "${node}"; then
        echo "${node}: key copied, initializing environment....."
        ssh "${node}" "mkdir -p /etc/docker/certs.d/harbor.martinhe.com"
        echo "Harbor certificate directory created"
        scp /etc/docker/certs.d/harbor.martinhe.com/martinhe.com.crt "${node}":/etc/docker/certs.d/harbor.martinhe.com/martinhe.com.crt
        echo "Harbor certificate copied"
        scp /etc/hosts "${node}":/etc/hosts
        echo "hosts file copied"
        scp -r /root/.docker "${node}":/root/
        echo "Harbor login credentials (/root/.docker) copied"
        scp /etc/resolv.conf "${node}":/etc/
    else
        echo "${node}: key copy failed"
    fi
done

#Run the script to sync:

# bash cp-key.sh

# vim ~/.vimrc  # disable vim auto-indent so pasted YAML keeps its indentation (for example with: set paste)

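Clone the project:

Version 0.6.1 is used because it can add master and node hosts automatically (see the 20.addnode.yml and 21.addmaster.yml steps below). Newer versions have not been evaluated yet.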

# git clone -b 0.6.1 https://github.com/easzlab/kubeasz.git

# mv /etc/ansible/* /opt/

# mv kubeasz/* /etc/ansible/

# cd /etc/ansible/

# cp example/hosts.m-masters.example ./hosts

Prepare the hosts file:

[Thu Jul 11 12:50 root@k8s-master /etc/ansible]#pwd
/etc/ansible

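# cat hosts
# Deployment node: usually the node that runs the ansible playbooks
# Variable NTP_ENABLED (=yes/no) controls whether chrony time sync is installed on the cluster
[deploy]
192.168.7.101 NTP_ENABLED=no

# Give every etcd member a NODE_NAME as below; the etcd cluster must have an odd number of nodes (1, 3, 5, 7, ...)
[etcd]
192.168.7.104 NODE_NAME=etcd1
#192.168.1.2 NODE_NAME=etcd2
#192.168.1.3 NODE_NAME=etcd3

[new-etcd] # reserved group, used later to add etcd nodes
#192.168.1.x NODE_NAME=etcdx

[kube-master]
192.168.7.101
#192.168.1.2

[new-master] # reserved group, used later to add master nodes
#192.168.1.5

[kube-node]
192.168.7.105
#192.168.1.4

[new-node] # reserved group, used later to add worker nodes
#192.168.1.xx

# Parameter NEW_INSTALL: yes = install a new Harbor, no = use an existing Harbor server
# If no domain name is used, set HARBOR_DOMAIN=""
[harbor]
#192.168.1.8 HARBOR_DOMAIN="harbor.yourdomain.com" NEW_INSTALL=no

# Load balancer, installs haproxy+keepalived (more than 2 nodes are supported; 2 are usually enough)
[lb]
#192.168.1.1 LB_ROLE=backup
#192.168.1.2 LB_ROLE=master

# [Optional] external load balancer, e.g. for forwarding NodePort services in your own environment
[ex-lb]
#192.168.1.6 LB_ROLE=backup EX_VIP=192.168.1.250
#192.168.1.7 LB_ROLE=master EX_VIP=192.168.1.250

[all:vars]
# --------- main cluster parameters ---------------
# Deployment mode: allinone, single-master, multi-master
DEPLOY_MODE=multi-master # multi-master mode

# Cluster major version; supported: v1.8, v1.9, v1.10, v1.11, v1.12, v1.13
K8S_VER="v1.13" # use v1.13

# Cluster MASTER IP, i.e. the VIP of the LB nodes. The kubeasz default makes the VIP listen on 8443 to distinguish it from the default apiserver port; this deployment uses 6443 instead (see KUBE_APISERVER)
# On public clouds, use the internal address and listening port of the cloud load balancer
MASTER_IP="192.168.7.248" # lb vip
KUBE_APISERVER="https://{{ MASTER_IP }}:6443" # changed to the apiserver port

# Network plugin; supported: calico, flannel, kube-router, cilium
CLUSTER_NETWORK="calico"

# Service CIDR; must not conflict with existing internal networks
SERVICE_CIDR="10.20.0.0/16"

# Pod CIDR (Cluster CIDR); must not conflict with existing internal networks
CLUSTER_CIDR="172.32.0.0/16"

# NodePort range
NODE_PORT_RANGE="30000-60000"

# kubernetes service IP (pre-allocated, usually the first IP in SERVICE_CIDR)
CLUSTER_KUBERNETES_SVC_IP="10.20.0.1"

# Cluster DNS service IP (pre-allocated from SERVICE_CIDR)
CLUSTER_DNS_SVC_IP="10.20.254.254"

# Cluster DNS domain
CLUSTER_DNS_DOMAIN="martinhe.local." # changed to the company name

# Username and password for cluster basic auth
BASIC_AUTH_USER="admin"
BASIC_AUTH_PASS="123456"

# --------- additional parameters --------------------
# Default binary directory
bin_dir="/usr/bin" # modified

# Certificate directory
ca_dir="/etc/kubernetes/ssl"

# Deployment directory, i.e. the ansible working directory; better not to change it
base_dir="/etc/ansible"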
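Several values above must agree with each other. CLUSTER_KUBERNETES_SVC_IP and CLUSTER_DNS_SVC_IP have to fall inside SERVICE_CIDR, and SERVICE_CIDR must not overlap CLUSTER_CIDR. The sketch below checks these rules with plain shell arithmetic and no external tools.

It also flags a problem with 172.32.0.0/16. That range lies just outside the RFC 1918 private block 172.16.0.0/12, so it is public address space. It works inside the cluster, but pods would likely be unable to reach any real external host in that range.

The helper names are illustrative:

```shell
# Convert a dotted IPv4 address to a 32-bit integer.
ip2int() {
  local IFS=.
  set -- $1          # split "a.b.c.d" into $1..$4 on dots
  echo "$(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))"
}

# Succeed if IP ($1) lies inside CIDR ($2, e.g. 10.20.0.0/16).
in_cidr() {
  local ip=$1 net=${2%/*} bits=${2#*/}
  local mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
  [ "$(( $(ip2int "$ip") & mask ))" -eq "$(( $(ip2int "$net") & mask ))" ]
}

# Two CIDR blocks overlap iff one contains the other's network address.
cidr_overlap() {
  in_cidr "${1%/*}" "$2" || in_cidr "${2%/*}" "$1"
}

# RFC 1918 private ranges.
is_private() {
  in_cidr "$1" 10.0.0.0/8 || in_cidr "$1" 172.16.0.0/12 || in_cidr "$1" 192.168.0.0/16
}

# Values copied from the [all:vars] section above.
SERVICE_CIDR="10.20.0.0/16"
CLUSTER_CIDR="172.32.0.0/16"

for ip in 10.20.0.1 10.20.254.254; do
  if in_cidr "$ip" "$SERVICE_CIDR"; then
    echo "OK: $ip is inside SERVICE_CIDR $SERVICE_CIDR"
  else
    echo "ERROR: $ip is outside SERVICE_CIDR $SERVICE_CIDR"
  fi
done

if cidr_overlap "$SERVICE_CIDR" "$CLUSTER_CIDR"; then
  echo "ERROR: SERVICE_CIDR and CLUSTER_CIDR overlap"
fi

if ! is_private "${CLUSTER_CIDR%/*}"; then
  echo "NOTE: CLUSTER_CIDR $CLUSTER_CIDR is not an RFC 1918 private range"
fi
```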

Copy the executables

Copy k8s-1.13.5.tar.gz into the ansible directory and extract it; the extracted files go directly into /etc/ansible/bin.

Deploy step by step:

Batch-replace the certificate subject field ST "HangZhou" with "BeiJing" in all roles:

# sed -i 's/HangZhou/BeiJing/g' `grep "HangZhou" /etc/ansible/roles/ -Rl`
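The backtick form above has two weaknesses. If grep finds nothing, sed is called without a file and fails. File names containing spaces are also split apart.

Below is a more defensive variant. It assumes GNU grep and xargs as shipped with Ubuntu, since the `-Z`, `-0` and `-r` options are GNU behaviour. `replace_in_tree` is a hypothetical name. Both strings are used as regular expressions, so they should not contain `/` or regex metacharacters:

```shell
# Replace every occurrence of $1 with $2 in all files below directory $3.
# grep -Z / xargs -0 keep file names with spaces intact, and xargs -r
# skips sed entirely when grep finds nothing (GNU grep/xargs options).
replace_in_tree() {
  grep -RlZ -- "$1" "$3" | xargs -0 -r sed -i "s/$1/$2/g"
}

# Equivalent of the command above:
# replace_in_tree HangZhou BeiJing /etc/ansible/roles/
```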

Environment initialization

[Thu Jul 11 12:50 root@k8s-master /etc/ansible]#pwd

/etc/ansible

# ansible-playbook 01.prepare.yml

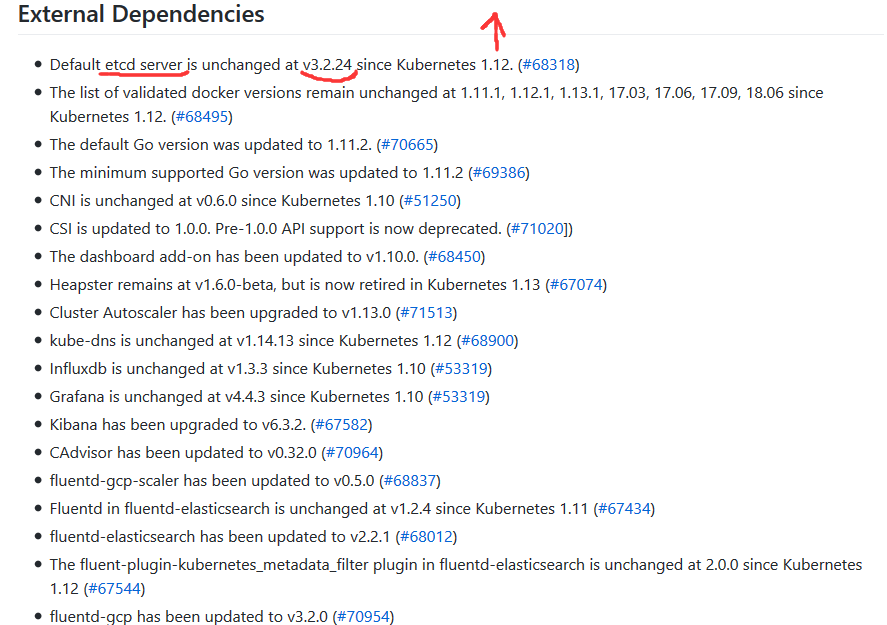

Check the versions of the external binaries that this Kubernetes release depends on:

https://github.com/kubernetes/kubernetes/blob/master/CHANGELOG-1.13.md#external-dependencies

Replace etcd with version 3.2.24:

# wget https://github.com/etcd-io/etcd/releases/download/v3.2.24/etcd-v3.2.24-linux-amd64.tar.gz

root@k8s-master /etc/ansible/bin]#tar xvf etcd-v3.2.24-linux-amd64.tar.gz

root@k8s-master /etc/ansible/bin]#cd etcd-v3.2.24-linux-amd64/

# mv etcd etcdctl ../

#./etcd --version
etcd Version: 3.2.24
Git SHA: 420a45226
Go Version: go1.8.7
Go OS/Arch: linux/amd64

Deploy the etcd cluster:

Optionally change the path of the service start script.

# ansible-playbook 02.etcd.yml
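The inventory requires an odd number of etcd members because etcd uses Raft. Writes need a quorum of floor(n/2)+1 members, so an n-member cluster survives floor((n-1)/2) failures. Going from an odd size to the next even size therefore adds no fault tolerance. The arithmetic, as a quick sketch:

```shell
# Raft quorum size and tolerated member failures for an n-member etcd cluster.
etcd_quorum()    { echo $(( $1 / 2 + 1 )); }
etcd_tolerance() { echo $(( ($1 - 1) / 2 )); }

for n in 1 2 3 4 5; do
  printf 'members=%d quorum=%d tolerated_failures=%d\n' \
    "$n" "$(etcd_quorum "$n")" "$(etcd_tolerance "$n")"
done
```

For example, it prints `members=4 quorum=3 tolerated_failures=1`, the same tolerance as three members. The single etcd member used in this deployment tolerates zero failures; three members would be the minimum for surviving one.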

Verify the etcd service on each etcd server:

Single-node test:

# export NODE_IPS="192.168.7.104"

# ETCDCTL_API=3 /usr/bin/etcdctl --endpoints=https://192.168.7.104:2379 --cacert=/etc/kubernetes/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem endpoint health

https://192.168.7.104:2379 is healthy: successfully committed proposal: took = 1.564869ms

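Multi-node test (separate the IPs with spaces: the for loop below splits NODE_IPS on whitespace, so a comma-separated list would be treated as a single endpoint):

# export NODE_IPS="192.168.7.104 XXX.XXX.XXX.XXX XXX.XXX.XXX.XXX"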

# for ip in ${NODE_IPS}; do ETCDCTL_API=3 /usr/bin/etcdctl --endpoints=https://${ip}:2379 --cacert=/etc/kubernetes/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem --key=/etc/etcd/ssl/etcd-key.pem endpoint health; done

https://192.168.7.104:2379 is healthy: successfully committed proposal: took = 2.198515ms
https://XXX.XXX.XXX.XXX:2379 is healthy: successfully committed proposal: took = 2.457971ms
https://XXX.XXX.XXX.XXX:2379 is healthy: successfully committed proposal: took = 1.859514ms
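etcdctl (API v3) also accepts several comma-separated URLs in one `--endpoints` flag, and `endpoint health` then reports on each of them. That means the loop can be replaced by a single call.

Below is a sketch of building that list. It assumes NODE_IPS is space-separated and that every member listens on client port 2379 as above. `etcd_endpoints` is a hypothetical helper:

```shell
# Turn a space-separated list of IPs into the comma-separated URL list
# accepted by etcdctl --endpoints (client port 2379, as used above).
etcd_endpoints() {
  local ip out=""
  for ip in $1; do          # unquoted on purpose: split on whitespace
    out="${out:+$out,}https://${ip}:2379"
  done
  echo "$out"
}

# Usage, with the certificates from the loop above:
# ETCDCTL_API=3 /usr/bin/etcdctl --endpoints="$(etcd_endpoints "$NODE_IPS")" \
#   --cacert=/etc/kubernetes/ssl/ca.pem --cert=/etc/etcd/ssl/etcd.pem \
#   --key=/etc/etcd/ssl/etcd-key.pem endpoint health
```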

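Deploy docker:

Optionally change the start script path. Docker was already installed beforehand here, so this playbook does not need to be run again.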

ansible# ansible-playbook 03.docker.yml

Deploy the master services:

Optionally change the start script path.

ansible# ansible-playbook 04.kube-master.yml

Deploy the nodes:

Docker must be installed on every node.

ansible# vim roles/kube-node/defaults/main.yml

# base container (pause/sandbox) image

SANDBOX_IMAGE: "harbor.martinhe.com/library/pause-amd64:3.1"

root@k8s-master1:/etc/ansible# ansible-playbook 05.kube-node.yml

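Deploy the calico network:

Optionally change the calico service start-script path and the CSR certificate information.

/etc/ansible]#vim roles/calico/templates/calico-v3.3.yaml.j2

Change the image addresses in this file to a local registry or an Alibaba Cloud registry; this example uses the local Harbor.

First image: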

/etc/ansible]#tar xvf calico-release-v3.3.6.tgz

/etc/ansible]#docker load < release-v3.3.6/images/calico-cni.tar

/etc/ansible]#docker tag b8eeeae14aa4 harbor.martinhe.com/library/cni:v3.3.6

/etc/ansible]#docker push harbor.martinhe.com/library/cni:v3.3.6

Second image:

/etc/ansible]#docker load < release-v3.3.6/images/calico-node.tar

/etc/ansible]#docker tag ce902e610f51 harbor.martinhe.com/library/node:v3.3.6

/etc/ansible]#docker push harbor.martinhe.com/library/node:v3.3.6

Third image:

/etc/ansible]#docker load < release-v3.3.6/images/calico-kube-controllers.tar

/etc/ansible]#docker tag 2fd138c9cb06 harbor.martinhe.com/library/kube-controllers:v3.3.6

/etc/ansible]#docker push harbor.martinhe.com/library/kube-controllers:v3.3.6
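Retagging by image ID, as above, means copying an ID by hand for each archive. When an archive carries a repository tag, `docker load` prints `Loaded image: <repo>:<tag>`, and that reference can be reused instead.

The sketch below assumes tagged archives and the `harbor.martinhe.com/library` project used in this document. For untagged archives docker prints `Loaded image ID: ...` instead, this helper returns nothing, and the ID-based commands above are still needed. `loaded_ref` and `harbor_ref` are hypothetical names:

```shell
# Read `docker load` output on stdin and print the loaded image reference.
# Tagged archives produce "Loaded image: repo:tag"; untagged ones produce
# "Loaded image ID: sha256:...", for which this prints nothing.
loaded_ref() {
  sed -n 's/^Loaded image: //p' | head -n 1
}

# Map an upstream reference into the Harbor project used in this document,
# keeping only the last path component:
#   calico/cni:v3.3.6 -> harbor.martinhe.com/library/cni:v3.3.6
harbor_ref() {
  echo "harbor.martinhe.com/library/${1##*/}"
}

# Usage on a host with docker and a prior docker login to Harbor:
# for t in release-v3.3.6/images/calico-*.tar; do
#   src=$(docker load -i "$t" | loaded_ref)
#   [ -n "$src" ] || { echo "untagged archive: $t"; continue; }
#   dst=$(harbor_ref "$src")
#   docker tag "$src" "$dst" && docker push "$dst"
# done
```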

Deploy the network:

/etc/ansible]# ansible-playbook 06.network.yml

Verify calico:

[Thu Jul 11 16:27 root@k8s-master /etc/ansible]#calicoctl node status
Calico process is running.

IPv4 BGP status
+---------------+-------------------+-------+----------+-------------+
| PEER ADDRESS  |     PEER TYPE     | STATE |  SINCE   |    INFO     |
+---------------+-------------------+-------+----------+-------------+
| 192.168.7.105 | node-to-node mesh | up    | 08:26:50 | Established |
+---------------+-------------------+-------+----------+-------------+

IPv6 BGP status
No IPv6 peers found.
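In a node-to-node mesh, every other node should appear once with INFO `Established`. The number of established peers should therefore equal the node count minus one: one peer here, and two after the node added below.

Below is a small parser for the table printed by `calicoctl node status`, offered as a sketch. `established_peers` is a hypothetical name, and the column position assumes the five-column layout shown above:

```shell
# Count peers whose INFO column (6th "|"-separated field, the first field
# being empty) reads "Established". Reads `calicoctl node status` on stdin.
established_peers() {
  awk -F'|' '$6 ~ /Established/ { n++ } END { print n + 0 }'
}

# Usage:
# calicoctl node status | established_peers
```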

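Add a node:

Put the new node's IP in the [new-node] group of /etc/ansible/hosts:

[kube-node]
192.168.7.105

[new-node] # reserved group, used later to add worker nodes
192.168.7.106

/etc/ansible# ansible-playbook 20.addnode.yml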

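Add a master:

Comment out the [lb] entries first, otherwise the playbook cannot proceed. Then put the new master's IP in the [new-master] group:

[kube-master]
192.168.7.101

[new-master] # reserved group, used later to add master nodes
192.168.7.107

/etc/ansible# ansible-playbook 21.addmaster.yml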

Verify the current state:

[Thu Jul 11 16:33 root@k8s-master /etc/ansible]#calicoctl node status
Calico process is running.

IPv4 BGP status
+---------------+-------------------+-------+----------+-------------+
| PEER ADDRESS  |     PEER TYPE     | STATE |  SINCE   |    INFO     |
+---------------+-------------------+-------+----------+-------------+
| 192.168.7.105 | node-to-node mesh | up    | 08:26:51 | Established |
| 192.168.7.106 | node-to-node mesh | up    | 08:33:24 | Established |
+---------------+-------------------+-------+----------+-------------+

IPv6 BGP status
No IPv6 peers found.

[Thu Jul 11 16:34 root@k8s-master /etc/ansible]#kubectl get nodes
NAME            STATUS                     ROLES    AGE     VERSION
192.168.7.101   Ready,SchedulingDisabled   master   37m     v1.13.5
192.168.7.105   Ready                      node     38m     v1.13.5
192.168.7.106   Ready                      node     3m57s   v1.13.5

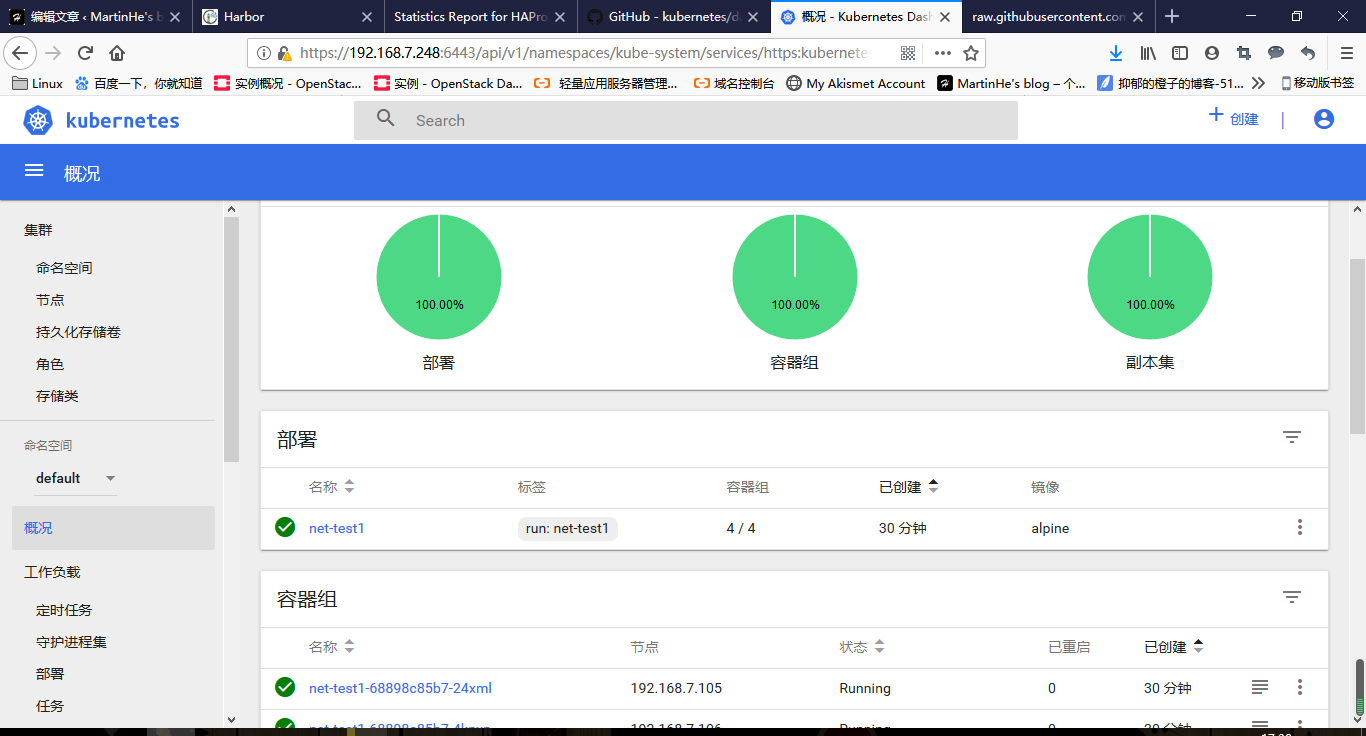

Run test containers:

# docker pull alpine

# kubectl run net-test1 --image=alpine --replicas=4 sleep 360000
kubectl run --generator=deployment/apps.v1 is DEPRECATED and will be removed in a future version. Use kubectl run --generator=run-pod/v1 or kubectl create instead.
deployment.apps/net-test1 created

Wait a moment, then check the pods:

[Thu Jul 11 16:55 root@k8s-master /etc/ansible]#kubectl get pods
NAME                         READY   STATUS    RESTARTS   AGE
net-test1-68898c85b7-24xml   1/1     Running   0          3m5s
net-test1-68898c85b7-4kpxn   1/1     Running   0          3m5s
net-test1-68898c85b7-9dqwd   1/1     Running   0          3m5s
net-test1-68898c85b7-vz472   1/1     Running   0          3m5s
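Rather than eyeballing the READY and STATUS columns, a small filter can list any pod that is not fully ready or not Running. This sketch reads the default `kubectl get pods` table on stdin, with columns in the order NAME, READY, STATUS, ... as above. `not_ready_pods` is a hypothetical helper:

```shell
# Read `kubectl get pods` output on stdin and print the name of every pod
# that is not fully ready (READY x/y with x != y) or not Running.
# Exit status: 0 if all pods are ready, 1 otherwise.
not_ready_pods() {
  awk 'NR > 1 && NF > 0 {
         split($2, r, "/")
         if (r[1] != r[2] || $3 != "Running") { print $1; bad = 1 }
       }
       END { exit bad + 0 }'
}

# Usage:
# kubectl get pods | not_ready_pods && echo "all pods ready"
# kubectl get pods -n kube-system | not_ready_pods
```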

View pod details (IP and node):

[Thu Jul 11 16:55 root@k8s-master /etc/ansible]#kubectl get pods -o wide
NAME                         READY   STATUS    RESTARTS   AGE     IP               NODE            NOMINATED NODE   READINESS GATES
net-test1-68898c85b7-24xml   1/1     Running   0          3m29s   172.32.227.194   192.168.7.105   <none>           <none>
net-test1-68898c85b7-4kpxn   1/1     Running   0          3m29s   172.32.75.1      192.168.7.106   <none>           <none>
net-test1-68898c85b7-9dqwd   1/1     Running   0          3m29s   172.32.75.2      192.168.7.106   <none>           <none>
net-test1-68898c85b7-vz472   1/1     Running   0          3m29s   172.32.227.193   192.168.7.105   <none>           <none>

Exec into one of the pods and test connectivity to a pod on the other node:

[Thu Jul 11 16:57 root@k8s-master /etc/ansible]#kubectl exec -it net-test1-68898c85b7-vz472 sh
/ # ifconfig
eth0      Link encap:Ethernet  HWaddr 66:DE:84:10:87:22
          inet addr:172.32.227.193  Bcast:0.0.0.0  Mask:255.255.255.255
          UP BROADCAST RUNNING MULTICAST  MTU:1500  Metric:1
          RX packets:12 errors:0 dropped:0 overruns:0 frame:0
          TX packets:0 errors:0 dropped:0 overruns:0 carrier:0
          collisions:0 txqueuelen:0
          RX bytes:936 (936.0 B)  TX bytes:0 (0.0 B)

/ # ping 172.32.75.1
PING 172.32.75.1 (172.32.75.1): 56 data bytes
64 bytes from 172.32.75.1: seq=0 ttl=62 time=3.809 ms
64 bytes from 172.32.75.1: seq=1 ttl=62 time=0.504 ms
64 bytes from 172.32.75.1: seq=2 ttl=62 time=0.534 ms
64 bytes from 172.32.75.1: seq=3 ttl=62 time=0.661 ms
^C
--- 172.32.75.1 ping statistics ---
4 packets transmitted, 4 packets received, 0% packet loss
round-trip min/avg/max = 0.504/1.377/3.809 ms

k8s application environment:

dashboard (1.10.1)

Deploy the Kubernetes web UI, dashboard:

/etc/ansible]# cd manifests/dashboard/
/etc/ansible/manifests/dashboard]# mkdir 1.10.1
/etc/ansible/manifests/dashboard]# cd 1.10.1/
/etc/ansible/manifests/dashboard/1.10.1]#cp ../*.yaml .

Files in the current directory:

/etc/ansible/manifests/dashboard/1.10.1]#ll
total 32
drwxr-xr-x 2 root root 4096 Jul 11 17:13 ./
drwxr-xr-x 4 root root 4096 Jul 11 17:02 ../
-rw-r--r-- 1 root root  357 Jul 11 17:13 admin-user-sa-rbac.yaml
-rw-r--r-- 1 root root 4761 Jul 11 17:13 kubernetes-dashboard.yaml
-rw-r--r-- 1 root root 2223 Jul 11 17:13 read-user-sa-rbac.yaml
-rw-r--r-- 1 root root  458 Jul 11 17:13 ui-admin-rbac.yaml
-rw-r--r-- 1 root root  477 Jul 11 17:13 ui-read-rbac.yaml

Change the image pull address in kubernetes-dashboard.yaml (line 113) to the local Harbor:

image: harbor.martinhe.com/library/kubernetes-dashboard-amd64:v1.10.1

Pull the dashboard image, retag it and push it to Harbor:

# docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kubernetes-dashboard-amd64:v1.10.1

# docker tag f9aed6605b81 harbor.martinhe.com/library/kubernetes-dashboard-amd64:v1.10.1

# docker push harbor.martinhe.com/library/kubernetes-dashboard-amd64:v1.10.1

Create the resources from the YAML files in the current directory:

/etc/ansible/manifests/dashboard/1.10.1]#kubectl create -f .

Check that the dashboard pod was created:

# kubectl get pods -n kube-system
NAME                                       READY   STATUS    RESTARTS   AGE
calico-kube-controllers-6b87bdc87d-q2b7x   1/1     Running   0          53m
calico-node-8rt62                          2/2     Running   0          53m
calico-node-gzd5l                          2/2     Running   0          47m
calico-node-jbqjr                          2/2     Running   0          53m
kubernetes-dashboard-76bc8997c7-pgpw6      1/1     Running   0          89s

Check the service address assigned to the dashboard:

# kubectl get service -n kube-system
NAME                   TYPE       CLUSTER-IP     EXTERNAL-IP   PORT(S)         AGE
kubernetes-dashboard   NodePort   10.20.78.128   <none>        443:56811/TCP   2m59s
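The PORT(S) value `443:56811/TCP` means service port 443 is exposed on NodePort 56811. The dashboard should therefore be reachable at https://<any-node-IP>:56811. That port has to lie inside NODE_PORT_RANGE (30000-60000) from the ansible hosts file. Here is a sketch of extracting and checking it; the function names are hypothetical:

```shell
# Extract the NodePort from a PORT(S) value such as "443:56811/TCP".
nodeport_of() {
  local p=${1#*:}    # drop the service port -> "56811/TCP"
  echo "${p%%/*}"    # drop the protocol     -> "56811"
}

# Succeed if port $1 lies inside a "low-high" range such as NODE_PORT_RANGE.
in_port_range() {
  [ "$1" -ge "${2%-*}" ] && [ "$1" -le "${2#*-}" ]
}

# Usage:
# port=$(nodeport_of 443:56811/TCP)
# in_port_range "$port" 30000-60000 && echo "https://192.168.7.105:$port"
```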

# kubectl cluster-info
Kubernetes master is running at https://192.168.7.248:6443
kubernetes-dashboard is running at https://192.168.7.248:6443/api/v1/namespaces/kube-system/services/https:kubernetes-dashboard:/proxy

Log in to the dashboard with a token:

# kubectl -n kube-system get secret | grep admin-user
admin-user-token-f492k   kubernetes.io/service-account-token   3   7m23s

# kubectl -n kube-system describe secret admin-user-token-f492k

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi11c2VyLXRva2VuLWY0OTJrIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6ImFkbWluLXVzZXIiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC51aWQiOiJlOTY0NTJkYS1hM2JjLTExZTktYWZiZS0wMDUwNTYzNTE3NzEiLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZS1zeXN0ZW06YWRtaW4tdXNlciJ9.QlLoDmy94Ey4wcnuFzYBSj1BiayOU2TXNsTyjbXy2lvEAjK8WsRjr0TMuIXN9GHo1zpuUEhUlsV1h72fdJRqfpzIt5aUfWbg4uc-oGQoGFhXyW3l9mZQTwY1AwwTsfuOl9yrGFAkh9KNG5jWppCWFzwghEuJJUxOULLNCWlDMFeJhboUqxpzyh_54WR-V4UiEd_9cxP63CibQbw99fJ6ZGdmCIYNAkxsYjafGcwNdFDBoTRfREUb8zfjexODbU-dWjKCU27ZccL0s46lL9E7asoVNo5KVL8YcJYVFy5EjN4s0aB76Ff40SSFhfEZ8qW1_BMJYHbJC-HTNy-_JvpHtw
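A service-account token is a JWT whose middle, base64url-encoded part contains the claims. Decoding it is a quick way to confirm which account a token belongs to before handing it out; here it should be `system:serviceaccount:kube-system:admin-user`.

This sketch only decodes the payload and does not verify the signature. `base64 -d` is assumed to be GNU coreutils, and the function names are hypothetical:

```shell
# Print the decoded payload (middle part) of a JWT given as $1.
# This only decodes; it does NOT verify the signature.
jwt_payload() {
  local p
  # base64url uses "-" and "_" instead of "+" and "/"
  p=$(printf '%s' "$1" | cut -d. -f2 | tr '_-' '/+')
  # base64url also drops "=" padding; restore it for base64 -d
  case $(( ${#p} % 4 )) in
    2) p="${p}==" ;;
    3) p="${p}=" ;;
  esac
  printf '%s' "$p" | base64 -d
}

# Print the "sub" claim, e.g. system:serviceaccount:kube-system:admin-user
jwt_sub() {
  jwt_payload "$1" | sed -n 's/.*"sub":"\([^"]*\)".*/\1/p'
}

# Usage (secret name from the output above):
# jwt_sub "$(kubectl -n kube-system get secret admin-user-token-f492k \
#   -o jsonpath='{.data.token}' | base64 -d)"
```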

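Test the login:

Kubeconfig login

Create a kubeconfig file and give a copy to the people who need to log in:

# cp /root/.kube/config /opt/kubeconfig

# vim /opt/kubeconfig  # append the token at the end; mind the YAML indentation: the token: line must be indented at the same level as client-key-data under users[].user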

token: eyJhbGciOiJSUzI1NiIsImtpZCI6IiJ9.eyJpc3MiOiJrdWJlcm5ldGVzL3NlcnZpY2VhY2NvdW50Iiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9uYW1lc3BhY2UiOiJrdWJlLXN5c3RlbSIsImt1YmVybmV0ZXMuaW8vc2VydmljZWFjY291bnQvc2VjcmV0Lm5hbWUiOiJhZG1pbi11c2VyLXRva2VuLWdzNXJkIiwia3ViZXJuZXRlcy5pby9zZXJ2aWNlYWNjb3VudC9zZXJ2aWNlLWFjY291bnQubmFtZSI6ImFkbWluLXVzZXIiLCJrdWJlcm5ldGVzLmlvL3NlcnZpY2VhY2NvdW50L3NlcnZpY2UtYWNjb3VudC51aWQiOiJmMzhjNWZhYy1hM2ViLTExZTktYWZiZS0wMDUwNTYzNTE3NzEiLCJzdWIiOiJzeXN0ZW06c2VydmljZWFjY291bnQ6a3ViZS1zeXN0ZW06YWRtaW4tdXNlciJ9.LWArw8mB_urwLbbIqjuz_gZ0ZpwaBTk_koBYtIru8rqQ5e2QsI_tnKpeVOMo0inDjDg2MDkMj1FEOi_eknw9-hv8z-y0HOx8SLfQ0z6EAT6PmBadwQJ7Zj0JYnRs0tHLEYQ33mHt6tvzgPnxXrIed4_rhj9-2EwYJnKT4qkrYUBe4LPJxQHaHGYs9wSA2WTDXga0CRobszydGjI93bYBeXGvLAgF_9LA0uGUth0Ew2K2frp1z2scjqxFUHNiIhHe5zIei5DjK1qUi6YEdXtFfxYoueAL4cTIUX92LzjrO937BBhUjll65qL6Im1cHYUOUiD94n7SI2WliS7heu7ng
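Appending by hand is easy to get wrong, because the `token:` line has to be indented exactly like `client-key-data:` under `users[].user`. This sketch copies that indentation. It assumes the user entry is the final block of the file, which holds for files written by kubectl because it serializes `users` last. `append_token` is a hypothetical helper:

```shell
# Append "token: <TOKEN>" to a kubeconfig file, using the same indentation
# as its client-key-data line so the token lands under users[].user.
# Assumes the users entry is the last block in the file.
append_token() {
  local kubeconfig=$1 token=$2 indent
  indent=$(sed -n 's/^\([[:space:]]*\)client-key-data:.*/\1/p' "$kubeconfig" | tail -n 1)
  printf '%stoken: %s\n' "$indent" "$token" >> "$kubeconfig"
}

# Usage:
# append_token /opt/kubeconfig "<token from the describe output above>"
```

Alternatively, `kubectl config set-credentials <user> --token=<token> --kubeconfig=/opt/kubeconfig` lets kubectl edit the file itself, where `<user>` is the user name already present in it.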

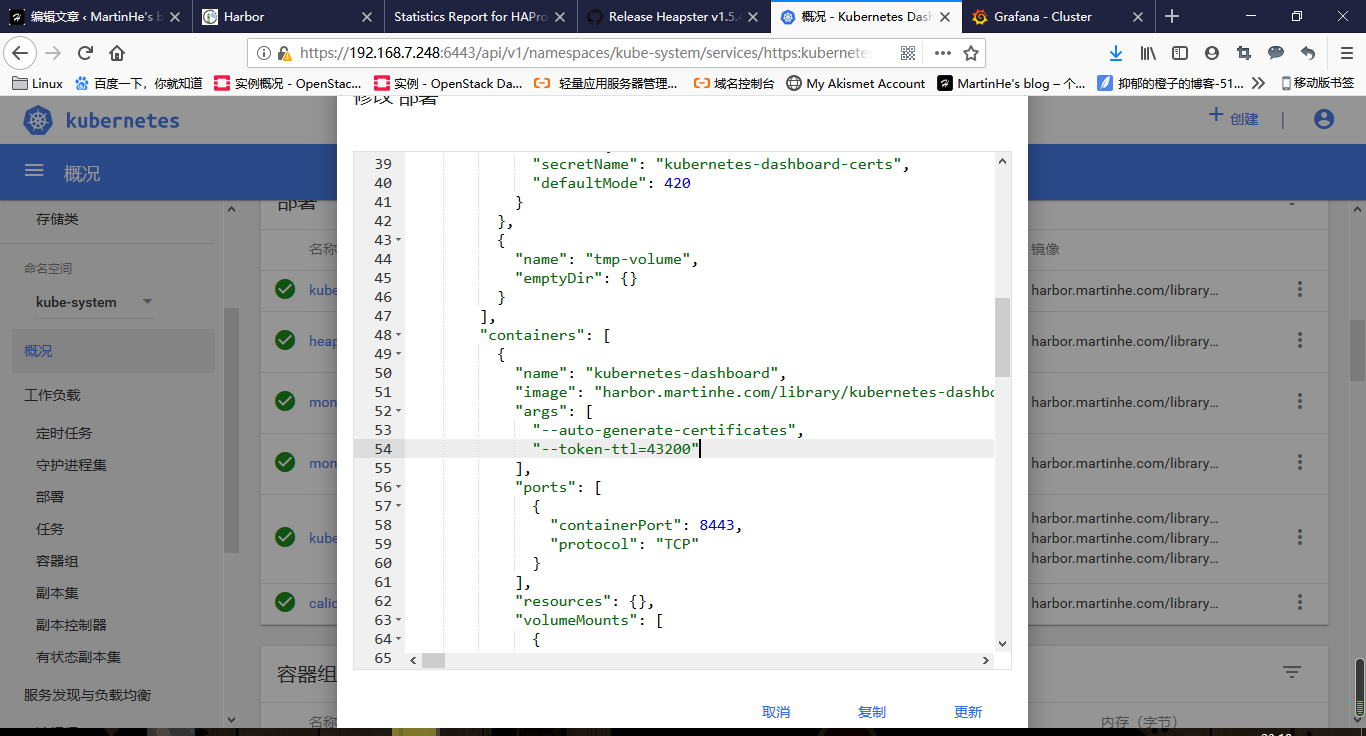

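Set the token session lifetime

Set the timeout to 43200 seconds (12 hours) with the --token-ttl argument:

# vim dashboard/kubernetes-dashboard.yaml

        image: harbor.martinhe.com/library/kubernetes-dashboard-amd64:v1.10.1
        ports:
        - containerPort: 8443
          protocol: TCP
        args:
          - --auto-generate-certificates
          - --token-ttl=43200

Apply the change with the command below. If the resources are created with kubectl apply in the first place, later edits to the files can be applied the same way. Resources created with kubectl create may otherwise have to be deleted and recreated:

# kubectl apply -f .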

Session persistence:

DNS service:

The two commonly used DNS add-ons are kube-dns and CoreDNS.

Deploy kube-dns

Source package download addresses:

Kubernetes 1.13.8 download:

https://dl.k8s.io/v1.13.8/kubernetes.tar.gz

1.13.8 client download:

https://dl.k8s.io/v1.13.8/kubernetes-client-linux-amd64.tar.gz

1.13.8 server download:

https://dl.k8s.io/v1.13.8/kubernetes-server-linux-amd64.tar.gz

1.13.8 node download:

https://dl.k8s.io/v1.13.8/kubernetes-node-linux-amd64.tar.gz

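Extract all four archives above; the kube-dns YAML template is under the following directory:

# ll kubernetes/cluster/addons/dns/
coredns/
kube-dns/
nodelocaldns/
OWNERS

# vim kubernetes/cluster/addons/dns/kube-dns/kube-dns.yaml.base

Edit the corresponding places: upstream marks every value that needs to be changed with an uppercase placeholder.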
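The placeholders can also be filled with sed instead of by hand. The sketch below assumes the template uses `__PILLAR__DNS__SERVER__` for the DNS service IP and `__PILLAR__DNS__DOMAIN__` for the cluster domain; check your copy with `grep __PILLAR__`. The domain is passed without its trailing dot because the `--domain=` line in the template supplies one.

Any remaining uppercase placeholders, for example a memory limit, still need editing by hand. `render_kube_dns` is a hypothetical name:

```shell
# Fill the kube-dns template placeholders with this cluster's values
# (CLUSTER_DNS_SVC_IP and CLUSTER_DNS_DOMAIN from the ansible hosts file).
#   $1 = template file, $2 = DNS service IP, $3 = domain without trailing dot
render_kube_dns() {
  sed -e "s/__PILLAR__DNS__SERVER__/$2/g" \
      -e "s/__PILLAR__DNS__DOMAIN__/$3/g" "$1"
}

# Usage:
# render_kube_dns kubernetes/cluster/addons/dns/kube-dns/kube-dns.yaml.base \
#   10.20.254.254 martinhe.local > kube-dns.yaml
# grep __PILLAR__ kube-dns.yaml   # anything left still needs manual edits
```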

Create directories for the DNS manifests:

[Thu Jul 11 19:11 root@k8s-master /etc/ansible/manifests]#mkdir dns/{kube-dns,coredns} -pv
mkdir: created directory 'dns'
mkdir: created directory 'dns/kube-dns'
mkdir: created directory 'dns/coredns'

/etc/ansible/manifests]#cd dns/kube-dns/

Copy the kube-dns image archives into the current directory:

/etc/ansible/manifests/dns/kube-dns]#ll
total 136992
-rw-r--r-- 1 root root  3983872 Jul 10 17:38 busybox-online.tar.gz
-rw-r--r-- 1 root root      289 Jul 10 17:40 busybox.yaml
-rw-r--r-- 1 root root 41687040 Jul 10 17:38 k8s-dns-dnsmasq-nanny-amd64_1.14.13.tar.gz  # dnsmasq: DNS cache that reduces kube-dns load and improves performance
-rw-r--r-- 1 root root 51441152 Jul 10 17:38 k8s-dns-kube-dns-amd64_1.14.13.tar.gz  # kube-dns: resolves service names
-rw-r--r-- 1 root root 43140608 Jul 10 17:38 k8s-dns-sidecar-amd64_1.14.13.tar.gz  # sidecar: periodically health-checks kube-dns and dnsmasq
-rw-r--r-- 1 root root     6360 Jul 10 17:56 kube-dns.yaml

Tag the images and push them to the local Harbor

Push the busybox image:

/etc/ansible/manifests/dns/kube-dns#docker load < busybox-online.tar.gz

/etc/ansible/manifests/dns/kube-dns#docker tag 747e1d7f6665 harbor.martinhe.com/library/busybox:latest

/etc/ansible/manifests/dns/kube-dns#docker push harbor.martinhe.com/library/busybox:latest

Push the k8s-dns-dnsmasq-nanny-amd64_1.14.13.tar.gz image:

#docker load < k8s-dns-dnsmasq-nanny-amd64_1.14.13.tar.gz

#docker tag 7b15476a7228 harbor.martinhe.com/library/k8s-dns-dnsmasq-nanny-amd64:1.14.13

#docker push harbor.martinhe.com/library/k8s-dns-dnsmasq-nanny-amd64:1.14.13

Push the k8s-dns-kube-dns-amd64_1.14.13.tar.gz image:

#docker load -i k8s-dns-kube-dns-amd64_1.14.13.tar.gz

#docker tag 82f954458b31 harbor.martinhe.com/library/k8s-dns-kube-dns-amd64:1.14.13

#docker push harbor.martinhe.com/library/k8s-dns-kube-dns-amd64:1.14.13

Push the k8s-dns-sidecar-amd64_1.14.13.tar.gz image:

#docker load < k8s-dns-sidecar-amd64_1.14.13.tar.gz

#docker tag 333fb0833870 harbor.martinhe.com/library/k8s-dns-sidecar-amd64:1.14.13

#docker push harbor.martinhe.com/library/k8s-dns-sidecar-amd64:1.14.13

Edit busybox.yaml in the current directory so that the image points to the local Harbor:

/etc/ansible/manifests/dns/kube-dns]#vim busybox.yaml

apiVersion: v1
kind: Pod
metadata:
  name: busybox
  namespace: default   # tests DNS resolution from the default namespace
spec:
  containers:
  - image: harbor.martinhe.com/library/busybox:latest
    command:
      - sleep
      - "3600"
    imagePullPolicy: Always
    name: busybox
  restartPolicy: Always

Create the busybox test pod:

# kubectl create -f busybox.yaml
pod/busybox created

Check the current pod list:

root@k8s-master /etc/ansible/manifests/dns/kube-dns]#kubectl get pods
NAME      READY   STATUS    RESTARTS   AGE
busybox   1/1     Running   0          23s

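Edit kube-dns.yaml in the current directory as shown below. The CPU and memory allocations inside also need to be raised. Typically memory is set to 4G, with a CPU request (soft limit) of 1 core and a CPU limit (hard limit) of 2 cores; values that are too small make DNS resolution slow.

clusterIP: 10.20.254.254   # line 33: the DNS IP configured earlier (CLUSTER_DNS_SVC_IP)
image: harbor.martinhe.com/library/k8s-dns-kube-dns-amd64:1.14.13   # line 102
- --domain=martinhe.local.   # line 132: the cluster domain configured earlier
image: harbor.martinhe.com/library/k8s-dns-dnsmasq-nanny-amd64:1.14.13   # line 153
- --server=/magedu.net/172.20.100.23#53   # line 174: optional, forward an internal domain to a local DNS server
- --server=/martinhe.local/127.0.0.1#10053   # line 175
image: harbor.martinhe.com/library/k8s-dns-sidecar-amd64:1.14.13   # line 194
- --probe=kubedns,127.0.0.1:10053,kubernetes.default.svc.martinhe.local,5,SRV   # line 207
- --probe=dnsmasq,127.0.0.1:53,kubernetes.default.svc.martinhe.local,5,SRV   # line 208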

Create the service and verify DNS:

[Thu Jul 11 20:12 root@k8s-master /etc/ansible/manifests/dns/kube-dns]#kubectl create -f kube-dns.yaml
service/kube-dns created
serviceaccount/kube-dns created
configmap/kube-dns created
deployment.extensions/kube-dns created

The kube-dns-7ff6498ffb-4btzc pod appears:

[Thu Jul 11 20:12 root@k8s-master /etc/ansible/manifests/dns/kube-dns]#kubectl get pods -n kube-system
NAME                                       READY   STATUS    RESTARTS   AGE
calico-kube-controllers-6b87bdc87d-q2b7x   1/1     Running   0          3h47m
calico-node-8rt62                          2/2     Running   0          3h47m
calico-node-gzd5l                          2/2     Running   0          3h41m
calico-node-jbqjr                          2/2     Running   0          3h47m
kube-dns-7ff6498ffb-4btzc                  3/3     Running   0          84s
kubernetes-dashboard-76bc8997c7-qnkcs      1/1     Running   0          71m

DNS resolution succeeds:

root@k8s-master /etc/ansible/manifests/dns/kube-dns]#kubectl exec busybox nslookup kubernetes
Server:    10.20.254.254
Address 1: 10.20.254.254 kube-dns.kube-system.svc.martinhe.local

Name:      kubernetes
Address 1: 10.20.0.1 kubernetes.default.svc.martinhe.local

Fully qualified DNS name: kubernetes.default.svc.martinhe.local

i.e. <service name>.<namespace name>.svc.<cluster domain>
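The naming rule can be written as a one-line helper, which is handy when composing lookups from the busybox pod. The defaults are assumptions for this cluster: namespace `default`, and domain `martinhe.local` (CLUSTER_DNS_DOMAIN without its trailing dot). `svc_fqdn` is a hypothetical name:

```shell
# Build the cluster DNS name of a Service:
#   <service>.<namespace>.svc.<cluster domain>
# $2 defaults to "default", $3 to this cluster's domain (no trailing dot).
svc_fqdn() {
  echo "$1.${2:-default}.svc.${3:-martinhe.local}"
}

# Usage from the busybox test pod:
# kubectl exec busybox -- nslookup "$(svc_fqdn kube-dns kube-system)"
```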

Deploy the heapster monitoring components:

- heapster: data collection
- influxdb: data storage
- grafana: web visualization

The files used below can be found under /etc/ansible/kubernetes/cluster/addons/cluster-monitoring/:

# cd kubernetes/cluster/addons/cluster-monitoring/

/etc/ansible/manifests/dns/kube-dns]#mkdir heapster
/etc/ansible/manifests/dns/kube-dns]#cd heapster/
/etc/ansible/manifests/dns/kube-dns/heapster]#ll
-rw-r--r-- 1 root root      2158 Jul 10 18:07 grafana.yaml
-rw-r--r-- 1 root root  75343360 Jul 10 17:38 heapster-amd64_v1.5.1.tar
-rw-r--r-- 1 root root 154731520 Jul 10 17:39 heapster-grafana-amd64-v4.4.3.tar
-rw-r--r-- 1 root root  12782080 Jul 10 17:39 heapster-influxdb-amd64_v1.3.3.tar
-rw-r--r-- 1 root root      1389 Jul 10 18:08 heapster.yaml
-rw-r--r-- 1 root root       979 Jul 10 18:10 influxdb.yaml

1. Import the images, tag them and push them to Harbor

#docker load < heapster-amd64_v1.5.1.tar

#docker tag 4129aa919411 harbor.martinhe.com/library/heapster-amd64:v1.5.1

#docker push harbor.martinhe.com/library/heapster-amd64:v1.5.1

#docker load < heapster-grafana-amd64-v4.4.3.tar

#docker tag 8cb3de219af7 harbor.martinhe.com/library/heapster-grafana-amd64:v4.4.3

#docker push harbor.martinhe.com/library/heapster-grafana-amd64:v4.4.3

#docker load -i heapster-influxdb-amd64_v1.3.3.tar

#docker tag 1315f002663c harbor.martinhe.com/library/heapster-influxdb-amd64:v1.3.3

#docker push harbor.martinhe.com/library/heapster-influxdb-amd64:v1.3.3

2. Change the image addresses in the YAML files

Edit heapster.yaml:

/etc/ansible/manifests/dns/kube-dns/heapster]#vim heapster.yaml
image: harbor.martinhe.com/library/heapster-amd64:v1.5.1   # line 39

Edit grafana.yaml:

/etc/ansible/manifests/dns/kube-dns/heapster]#vim grafana.yaml
image: harbor.martinhe.com/library/heapster-grafana-amd64:v4.4.3   # line 17

Edit influxdb.yaml:

/etc/ansible/manifests/dns/kube-dns/heapster]#vim influxdb.yaml
image: harbor.martinhe.com/library/heapster-influxdb-amd64:v1.3.3   # line 16

3. Create the services

/etc/ansible/manifests/dns/kube-dns/heapster]#kubectl create -f .
deployment.extensions/monitoring-grafana created
service/monitoring-grafana created
serviceaccount/heapster created
clusterrolebinding.rbac.authorization.k8s.io/heapster created
deployment.extensions/heapster created
service/heapster created
deployment.extensions/monitoring-influxdb created
service/monitoring-influxdb created

Check that the three services heapster, monitoring-grafana and monitoring-influxdb were created:

root@k8s-master /etc/ansible/manifests/dns/kube-dns/heapster]#kubectl get pod -n kube-system
NAME                                       READY   STATUS    RESTARTS   AGE
calico-kube-controllers-6b87bdc87d-q2b7x   1/1     Running   0          5h50m
calico-node-8rt62                          2/2     Running   0          5h50m
calico-node-gzd5l                          2/2     Running   0          5h43m
calico-node-jbqjr                          2/2     Running   0          5h50m
heapster-697c8c8655-wdvh9                  1/1     Running   0          45s
kube-dns-7ff6498ffb-4btzc                  3/3     Running   0          124m
kubernetes-dashboard-76bc8997c7-qnkcs      1/1     Running   0          3h14m
monitoring-grafana-7554598698-z6nhx        1/1     Running   0          46s
monitoring-influxdb-5b7776b86f-pkm4z       1/1     Running   0          46s