1. Base environment preparation

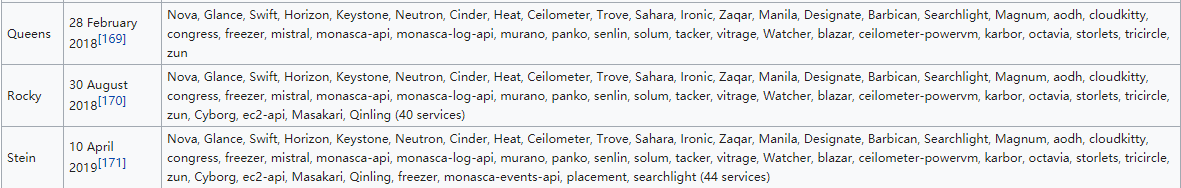

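Server (virtual machine) configuration:

Virtual machine configuration:

The virtual machines currently use centos_mini1511.iso (CentOS 7.2); newer releases have not been tested yet. Each VM has a 100 GB disk and four NICs. The first two NICs use host-only mode and the other two use NAT mode, and each pair is bonded (either for more bandwidth or as active-backup). Give each VM at least two CPU cores and tick the virtualization option, otherwise OpenStack instances cannot be started later. Allocate at least 3 GB of memory; more is better.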

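Rename the network interfaces

- At the installer boot screen, enter Edit mode and append the following parameters to the end of the kernel command line so that the NICs are named eth0, eth1, and so on:

net.ifnames=0 biosdevname=0

- Press Enter to start the installation.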

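Recommended partitioning

- /boot partition: 512 MB is enough; typical usage is around 200 MB.

- / partition: takes the remaining space.

- swap partition: optional and not recommended. Adding memory is preferable; if swap is needed, put it on an SSD, which performs much better than a mechanical disk.

Select the local time zone, choose a minimal installation, and start the installation.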

Change the host name

- Edit /etc/hostname, or run the following command followed by the new host name:

hostnamectl set-hostname

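Edit the hosts file on every server

- Map each host's IP address to its host name so that hosts can later be reached by name. Alternatively, add the records to an internal DNS server.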
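As a minimal sketch of such a hosts file, the block below writes a few name-to-address pairs into an example file. The pairs are inferred from the prompts and addresses that appear elsewhere in these notes, and the `HOSTS_FILE` variable and the `hosts.example` path are assumptions made for illustration. On a real node the lines would go into /etc/hosts, extended with the remaining servers.

```shell
#!/bin/bash
# Sketch only: HOSTS_FILE is a hypothetical target; use /etc/hosts on a real node.
HOSTS_FILE="${HOSTS_FILE:-./hosts.example}"

# Name/address pairs inferred from the prompts and addresses used in these notes.
cat > "$HOSTS_FILE" <<'EOF'
172.16.36.101 vm1-controller1
172.16.36.103 vm3-mysql-membercache
172.16.36.104 vm4-rabbitmq
EOF

# Look up one name to confirm that the mapping was written.
awk '$2 == "vm1-controller1" { print $1 }' "$HOSTS_FILE"
```

The same file can be copied to every server so that all nodes resolve each other identically.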

Disable the firewall, SELinux, and the NetworkManager service
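The notes do not list the commands for this step. A minimal sketch follows, assuming the stock CentOS 7 unit names `firewalld` and `NetworkManager`; the two helper functions are hypothetical names introduced only for this example. The real commands are left as comments so that nothing is changed until they are run deliberately as root.

```shell
#!/bin/bash
# Sketch only; the helper names are illustrative and not part of any package.

# Rewrite the SELINUX= line in the given config file so the change survives a reboot.
set_selinux_disabled() {
    local cfg="$1" tmp
    tmp="$(mktemp)"
    sed 's/^SELINUX=.*/SELINUX=disabled/' "$cfg" > "$tmp" && cat "$tmp" > "$cfg"
    rm -f "$tmp"
}

# Stop the given systemd units now and keep them from starting at boot.
stop_and_disable() {
    systemctl stop "$@"
    systemctl disable "$@"
}

# On a real node, as root:
#   stop_and_disable firewalld NetworkManager
#   setenforce 0                               # permissive until the next reboot
#   set_selinux_disabled /etc/selinux/config
```

Rehearsing `set_selinux_disabled` on a copy of the config file first is a cheap way to confirm that only the `SELINUX=` line changes.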

NIC bonding configuration

Host-only network configuration

bond0 configuration

[root@vm1-controller1 network-scripts]# vim ifcfg-bond0

BOOTPROTO=none

NAME=bond0

DEVICE=bond0

TYPE=Bond

BONDING_MASTER=YES

ONBOOT=YES

BONDING_OPTS="mode=1 miimon=100" # bonding mode 1 (active-backup); check the link state every 100 ms

IPADDR=172.16.36.101

PREFIX=16

eth0 configuration

[root@vm1-controller1 network-scripts]# vim ifcfg-eth0

IPV6INIT="yes"

IPV6_AUTOCONF="yes"

BOOTPROTO="none"

DEVICE="eth0"

ONBOOT="yes"

MASTER=bond0

USERCTL=no # non-root users may not control this interface

SLAVE=yes

eth1 configuration

[root@vm1-controller1 network-scripts]# vim ifcfg-eth1

IPV6INIT="yes"

IPV6_AUTOCONF="yes"

BOOTPROTO="none"

DEVICE="eth1"

ONBOOT="yes"

MASTER=bond0

USERCTL=no # non-root users may not control this interface

SLAVE=yes

NAT network configuration

bond1 configuration

[root@vm1-controller1 network-scripts]# vim ifcfg-bond1

BOOTPROTO=none

NAME=bond1

DEVICE=bond1

TYPE=Bond

BONDING_MASTER=YES

ONBOOT=YES

BONDING_OPTS="mode=1 miimon=100" # bonding mode 1 (active-backup); check the link state every 100 ms

IPADDR=192.168.7.101

PREFIX=21

GATEWAY=192.168.7.254

DNS1=202.106.0.20

eth2 configuration

[root@vm1-controller1 network-scripts]# vim ifcfg-eth2

IPV6INIT="yes"

IPV6_AUTOCONF="yes"

BOOTPROTO="none"

DEVICE="eth2"

ONBOOT="yes"

MASTER=bond1

USERCTL=no # non-root users may not control this interface

SLAVE=yes

eth3 configuration

[root@vm1-controller1 network-scripts]# vim ifcfg-eth3

IPV6INIT="yes"

IPV6_AUTOCONF="yes"

BOOTPROTO="none"

DEVICE="eth3"

ONBOOT="yes"

MASTER=bond1

USERCTL=no # non-root users may not control this interface

SLAVE=yes
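The four slave files above differ only in DEVICE and MASTER, so they can be generated rather than typed by hand. A minimal sketch, assuming a hypothetical helper `write_slave_cfg` and an output directory that defaults to a scratch directory (on a real node the files belong in /etc/sysconfig/network-scripts):

```shell
#!/bin/bash
# write_slave_cfg DEVICE MASTER DIR: write ifcfg-DEVICE into DIR as a slave of MASTER.
write_slave_cfg() {
    local dev="$1" master="$2" dir="$3"
    cat > "$dir/ifcfg-$dev" <<EOF
IPV6INIT="yes"
IPV6_AUTOCONF="yes"
BOOTPROTO="none"
DEVICE="$dev"
ONBOOT="yes"
MASTER=$master
USERCTL=no
SLAVE=yes
EOF
}

# Scratch directory by default, so no live configuration is touched.
OUT_DIR="${OUT_DIR:-$(mktemp -d)}"
write_slave_cfg eth0 bond0 "$OUT_DIR"
write_slave_cfg eth1 bond0 "$OUT_DIR"
write_slave_cfg eth2 bond1 "$OUT_DIR"
write_slave_cfg eth3 bond1 "$OUT_DIR"
ls "$OUT_DIR"
```

After the files are in place and the network service has been restarted, reading /proc/net/bonding/bond0 shows the bonding mode and which slave is currently active.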

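Reconfigure the yum repositories on every server

Add the EPEL repository only after OpenStack has been installed; otherwise it can cause incompatibilities with the OpenStack packages.

Install common base tools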

[root@vm1-controller1 ~]# yum install vim iotop bc gcc gcc-c++ glibc glibc-devel pcre \

pcre-devel openssl openssl-devel zip unzip zlib-devel net-tools \

lrzsz tree ntpdate telnet lsof tcpdump wget libevent libevent-devel \

systemd-devel bash-completion traceroute -y

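Synchronize the time on every server

The clocks must be synchronized; otherwise creating virtual machines later can fail in various ways.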

[root@vm1-controller1 ~]# cp /usr/share/zoneinfo/Asia/Shanghai /etc/localtime

[root@vm1-controller1 ~]# ntpdate time3.aliyun.com && hwclock -w

A scheduled task can be set up to repeat this automatically:

[root@vm1-controller1 ~]# echo "*/5 * * * * /usr/sbin/ntpdate \

time3.aliyun.com && /usr/sbin/hwclock -w" > /var/spool/cron/root

[root@vm1-controller1 ~]# systemctl restart crond.service

Tune the kernel parameters and connection limits:

- /etc/sysctl.conf

- /etc/security/limits.conf

Shut down and take a snapshot

Do not run yum update.

2. OpenStack environment preparation

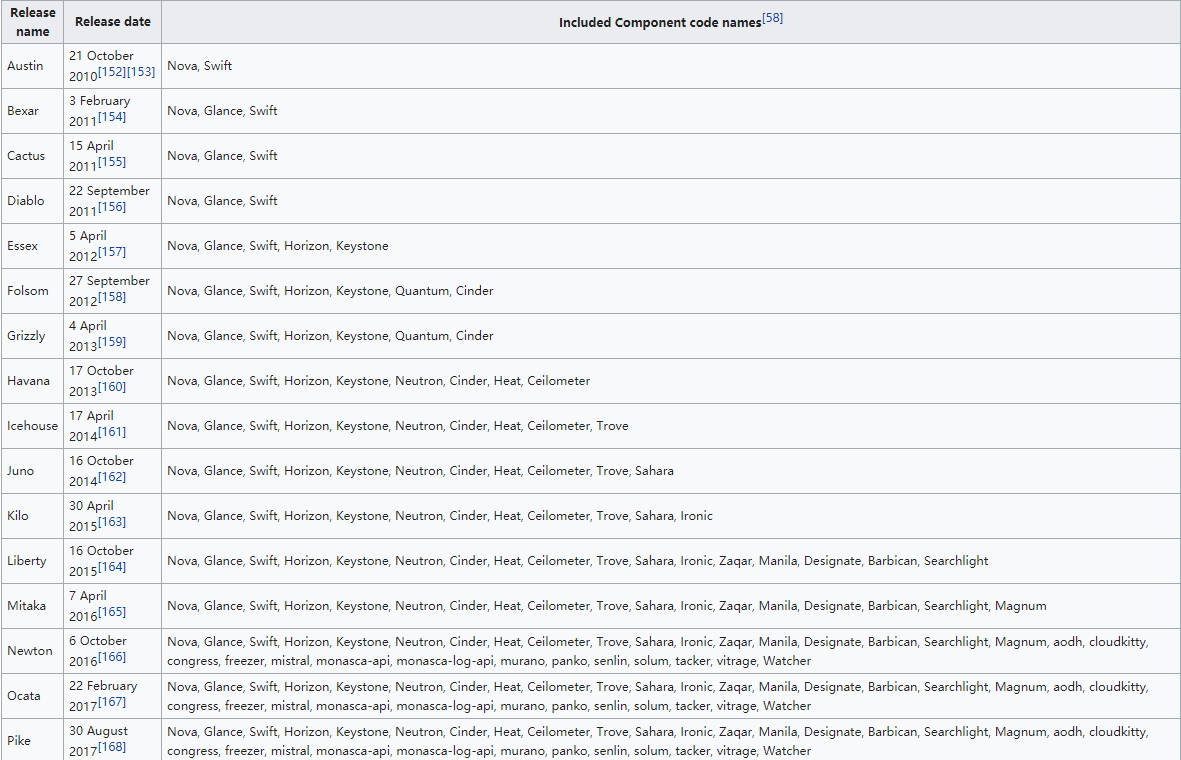

OpenStack release history:

- https://en.wikipedia.org/wiki/OpenStack#Release_history

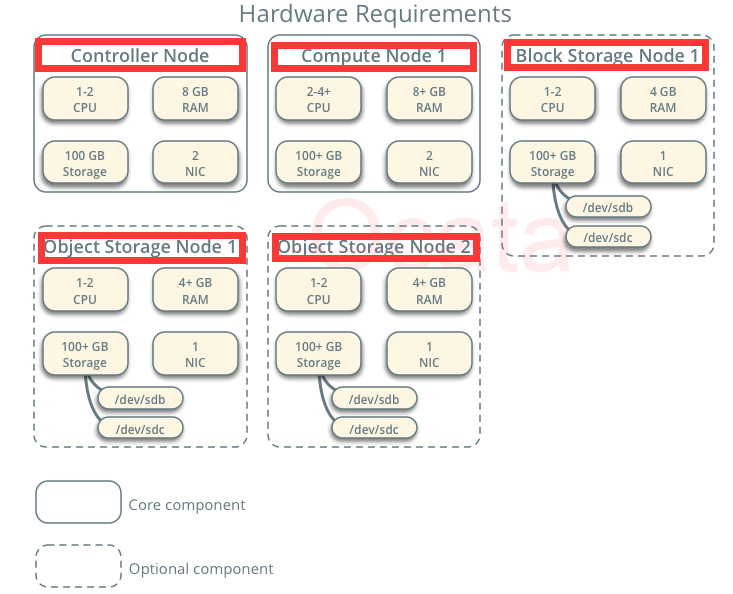

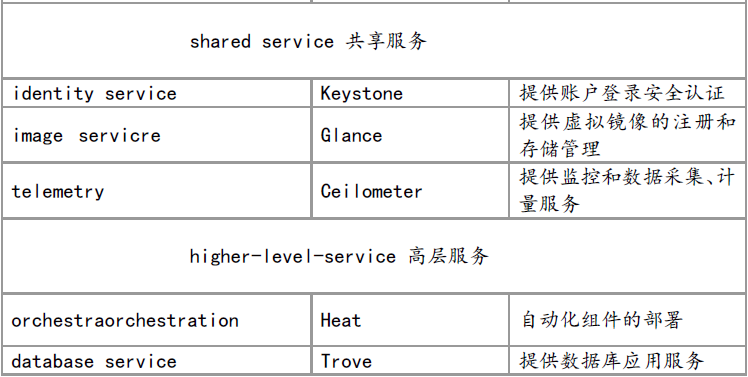

Functions of each component:

Ocata release

- https://docs.openstack.org/ocata/zh_CN/install-guide-rdo/index.html

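Preparing to install the OpenStack base components

- Alpha: an internal test version. It is generally not released externally and is usually shared only among the developers. It has many bugs and still needs changes.

- Dev: a development version. It can appear earlier than a beta version, possibly before anything has been released, which means it is usually less stable than a beta.

- Beta: also a test version, released after Alpha. New features keep being added at this stage.

- RC (Release Candidate): no new features are added; the focus is on fixing bugs.

- GA (General Availability): the officially released version.

- Release: the "final version". After the series of test versions above there is eventually an official version, which is the one delivered to users. It is sometimes called the standard version.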

List the OpenStack release packages available in yum

[root@vm1-controller1 ~]# yum list centos-release-openstack*

Install the Ocata yum repository on every server:

The load-balancer servers are excluded.

[root@vm1-controller1 ~]# yum install -y centos-release-openstack-ocata.noarch

Install the OpenStack client on every server, that is, on the controller and the compute nodes:

[root@vm1-controller1 ~]# yum install python-openstackclient -y

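Install the OpenStack SELinux policy package on every server (not needed if SELinux is disabled):

If SELinux is enabled on a node, this package automatically applies the SELinux settings that the OpenStack services need: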

[root@vm1-controller1 ~]# yum install openstack-selinux -y

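Install the database service:

The database can be installed on a separate server. All OpenStack components store their data in the database; nova, in addition, calls the other components through their APIs:

Official MySQL download page: https://dev.mysql.com/downloads/

Install the Python SQL client module on the controller

The controller uses it to connect to the database.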

[root@vm1-controller1 ~]# yum install mariadb python2-PyMySQL -y

Install MariaDB on the dedicated database server

[root@vm3-mysql-membercache ~]# yum install mariadb mariadb-server -y

Configure the database:

[root@vm3-mysql-membercache ~]# vim /etc/my.cnf.d/openstack.cnf

[mysqld]

bind-address = 0.0.0.0

default-storage-engine = innodb

innodb_file_per_table = on

max_connections = 4096

collation-server = utf8_general_ci

character-set-server = utf8

Start the service and enable it at boot:

[root@vm3-mysql-membercache ~]# systemctl start mariadb && \

systemctl enable mariadb

Secure the database installation:

[root@vm3-mysql-membercache ~]# mysql_secure_installation

Configure my.cnf. Note: this configuration did not work with MariaDB 10.1.20 (the format below may be wrong):

[mysqld]

socket=/var/lib/mysql/mysql.sock

user=mysql

symbolic-links=0

datadir=/data/mysql

innodb_file_per_table=1

#skip-grant tables

relay-log=/data/mysql

server-id=10

log-error=/data/mysql-log/mysql_error.txt

log-bin=/data/mysql-binlog/master-log

#general_log=ON

#general_log_file=/data/general_mysql.log

long_query_time=5

slow_query_log=1

slow_query_log_file=/data/mysql

slow_query_log_file=/data/mysql-log/slow_mysql.txt

max_connections=1000

bind-address=172.16.36.103

[client]

port=3306

socket=/var/lib/mysql/mysql.sock

[mysqld_safe]

log-error=/data/mysql-log/mysqld-safe.log

pid-file=/var/lib/mysql/mysql.sock

Create the directories used by the database:

[root@vm3-mysql-membercache ~]# mkdir -pv /data/{mysql,mysql-log,mysql-binlog}

mkdir: created directory ‘/data’

mkdir: created directory ‘/data/mysql’

mkdir: created directory ‘/data/mysql-log’

mkdir: created directory ‘/data/mysql-binlog’

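Install the RabbitMQ server:

For RabbitMQ cluster configuration, see studylinux.

The components communicate with each other by sending and receiving messages. RabbitMQ can be installed on its own server or together with the memcached server: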

[root@vm4-rabbitmq ~]# yum install rabbitmq-server -y

Start the service and enable it at boot:

[root@vm4-rabbitmq ~]# systemctl enable rabbitmq-server && \

systemctl start rabbitmq-server

Add the openstack user:

[root@vm4-rabbitmq ~]# rabbitmqctl add_user openstack 123456

Creating user "openstack" ...

Grant the openstack user configure, write, and read permissions:

[root@vm4-rabbitmq ~]# rabbitmqctl set_permissions openstack ".*" ".*" ".*"

Setting permissions for user "openstack" in vhost "/" ...

Enable the RabbitMQ web management plugin:

[root@vm4-rabbitmq ~]# rabbitmq-plugins enable rabbitmq_management

List the plugins:

[root@vm4-rabbitmq ~]# rabbitmq-plugins list

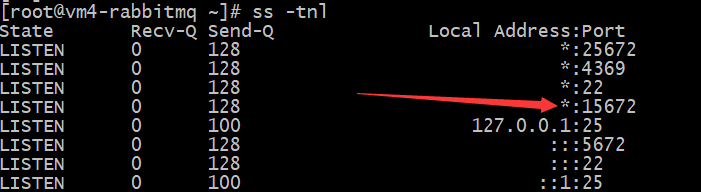

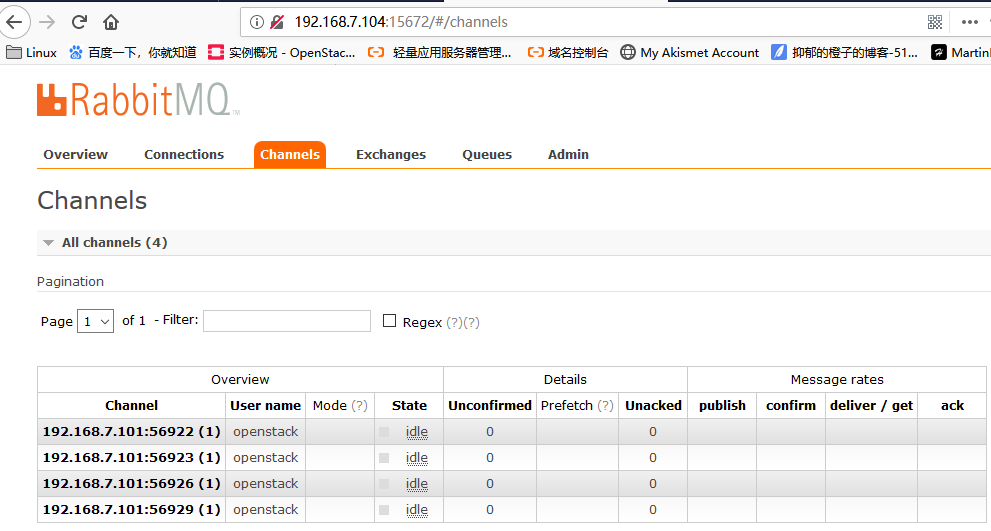

Access the RabbitMQ web interface:

The default username and password are both guest. The web interface listens on port 15672; the port can be changed.

http://172.16.36.104:15672

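Install the Memcached server. It is usually installed on the controller; here it runs on a separate server, and python-memcached is installed on the controller.

It caches the authentication tokens of the OpenStack services.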

[root@vm3-mysql-memcached ~]# yum install memcached -y

[root@vm1-controller1 ~]# yum install python-memcached -y

Edit the configuration file:

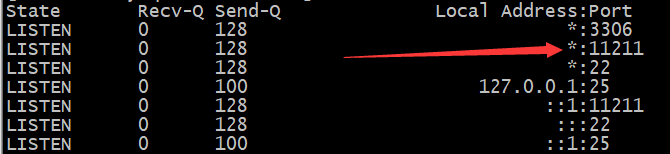

[root@vm3-mysql-memcached ~]# vim /etc/sysconfig/memcached

PORT="11211"

USER="memcached"

MAXCONN="4096"

CACHESIZE="1024"

OPTIONS="-l 0.0.0.0,::1"

Start the service and enable it at boot:

[root@vm3-mysql-memcached ~]# systemctl enable memcached && \

systemctl start memcached

Check the listening port:
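The command for this step is not shown in these notes. One way to check, sketched here with a hypothetical helper `port_listening`, is to filter the output of `ss -tnl` for the memcached port configured above (11211):

```shell
#!/bin/bash
# port_listening PORT: read `ss -tnl` output on stdin and succeed
# if some local address ends in :PORT.
port_listening() {
    awk -v port="$1" '
        NR > 1 { n = split($4, parts, ":"); if (parts[n] == port) found = 1 }
        END    { exit found ? 0 : 1 }
    '
}

# On the memcached server:
#   ss -tnl | port_listening 11211 && echo "memcached is listening"
```

The same helper works for the other port checks in this guide by changing the port number.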

3. Deploying the Keystone identity service

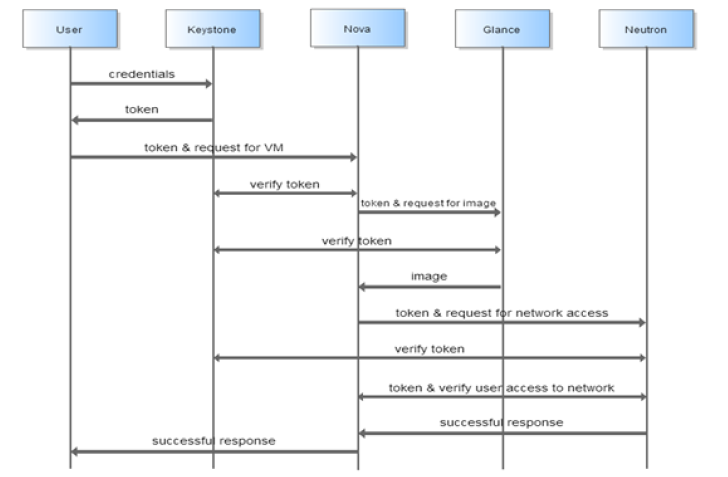

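Keystone overview:

Keystone involves the following main concepts: User, Tenant, Role, and Token:

- User: a user of OpenStack.

- Tenant: a tenant, which can be understood as the collection of resources owned by a person, a project, or an organization. A tenant can have many users, and those users can use the tenant's resources according to their permissions.

- Role: a role, used to assign permissions for operations. A role can be assigned to a user, which gives that user the permissions associated with the role.

- Token: a string of bits or characters used as a credential for accessing resources. A token carries the scope of the resources it can access and its validity period.

Keystone database configuration

# Create the keystone database: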

MariaDB [(none)]> CREATE DATABASE keystone;

# Grant proper access to the keystone database:

MariaDB [(none)]> GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'%' \

IDENTIFIED BY 'keystone123';
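The same pair of statements is repeated for every service database later in this guide, so it can help to generate them. A minimal sketch, assuming a hypothetical helper `service_db_sql`; `IF NOT EXISTS` is an addition that makes a re-run harmless, and the password is the example value used above, which should be replaced in any real deployment:

```shell
#!/bin/bash
# service_db_sql DB USER PASSWORD: print the CREATE DATABASE and GRANT
# statements for one service database.
service_db_sql() {
    local db="$1" user="$2" pass="$3"
    printf 'CREATE DATABASE IF NOT EXISTS %s;\n' "$db"
    printf "GRANT ALL PRIVILEGES ON %s.* TO '%s'@'%%' IDENTIFIED BY '%s';\n" "$db" "$user" "$pass"
}

service_db_sql keystone keystone keystone123

# On the database server the output could be piped into the client, for example:
#   service_db_sql keystone keystone keystone123 | mysql -uroot -p
```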

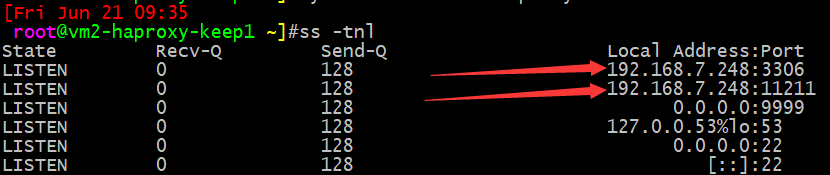

Configure the HAProxy proxy:

listen mariadb_port_3306

bind 192.168.7.248:3306

mode tcp

log global

server 172.16.36.103 172.16.36.103:3306 check inter 3000 fall 2 rise 5

listen memcached_port_11211

bind 192.168.7.248:11211

mode tcp

log global

server 172.16.36.103 172.16.36.103:11211 check inter 3000 fall 2 rise 5
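Both sections follow one pattern, and later services add more of the same. A minimal sketch that prints such a section, assuming a hypothetical helper `haproxy_listen`; the health-check values are copied from the sections above, and the indentation is only for readability:

```shell
#!/bin/bash
# haproxy_listen NAME VIP PORT BACKEND_IP: print one TCP listen section
# in the style used above.
haproxy_listen() {
    local name="$1" vip="$2" port="$3" backend="$4"
    cat <<EOF
listen ${name}_port_${port}
    bind ${vip}:${port}
    mode tcp
    log global
    server ${backend} ${backend}:${port} check inter 3000 fall 2 rise 5
EOF
}

haproxy_listen mariadb 192.168.7.248 3306 172.16.36.103
haproxy_listen memcached 192.168.7.248 11211 172.16.36.103
```

Before reloading HAProxy, `haproxy -c -f /etc/haproxy/haproxy.cfg` checks the edited file for syntax errors.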

Verify the ports:

On the controller, add the HAProxy domain name and VIP address to the hosts file.

[root@vm1-controller1 ~]# vim /etc/hosts

192.168.7.248 vm2-haproxy-keep1.martin.com

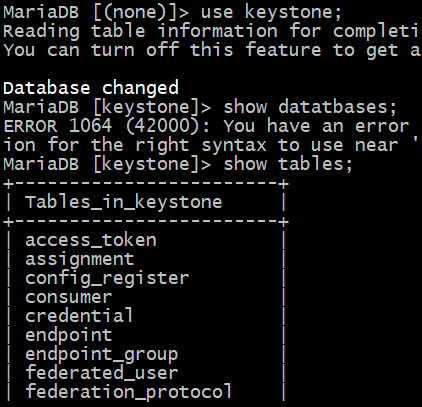

Verify the database connection:

On the controller, connect to MySQL as the keystone user.

[root@vm1-controller1 ~]# mysql -ukeystone -hvm2-haproxy-keep1.martin.com -pkeystone123

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 43

Server version: 10.1.20-MariaDB MariaDB Server

Copyright (c) 2000, 2016, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| keystone |

+--------------------+

2 rows in set (0.00 sec)

Deploy and configure Keystone

Run the following command to install the packages.

# yum install openstack-keystone httpd mod_wsgi

Edit /etc/keystone/keystone.conf and complete the following actions:

Generate a temporary token:

[root@vm1-controller1 ~]# openssl rand -hex 10

5d9fd0985867b7c5b173

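Edit the configuration file. In the [DEFAULT] section, set the temporary admin token:

admin_token = 5d9fd0985867b7c5b173

In the [database] section, configure database access: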

[database]

# ...

connection = mysql+pymysql://keystone:keystone123@vm2-haproxy-keep1.martin.com/keystone

In the [token] section, configure the Fernet token provider:

[token]

# ...

provider = fernet

Populate the Identity service database and verify it:

[root@vm1-controller1 ~]# su -s /bin/sh -c "keystone-manage db_sync" keystone

Keystone log file:

[root@vm1-controller1 ~]# ll /var/log/keystone/keystone.log

-rw-rw---- 1 root keystone 12702 Jun 21 00:25 /var/log/keystone/keystone.log

Initialize the Fernet keys:

[root@vm1-controller1 ~]# keystone-manage fernet_setup --keystone-user keystone --keystone-group keystone

[root@vm1-controller1 ~]# keystone-manage credential_setup --keystone-user keystone --keystone-group keystone

[root@vm1-controller1 ~]# ll /etc/keystone/fernet-keys/

total 8

-rw------- 1 keystone keystone 44 Jun 21 00:29 0

-rw------- 1 keystone keystone 44 Jun 21 00:29 1

Configure the Apache HTTP server

Edit /etc/httpd/conf/httpd.conf and set the ServerName option to the controller node:

[root@vm1-controller1 ~]# vim /etc/httpd/conf/httpd.conf

ServerName 192.168.7.101:80

Create a link to the /usr/share/keystone/wsgi-keystone.conf file:

[root@vm1-controller1 ~]# ln -s /usr/share/keystone/wsgi-keystone.conf /etc/httpd/conf.d/

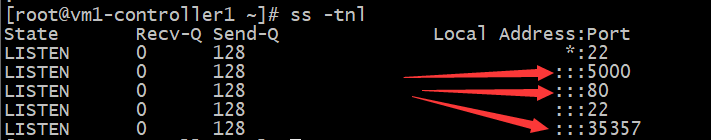

Start the service and enable it at boot:

[root@vm1-controller1 ~]# systemctl enable httpd && \

systemctl start httpd

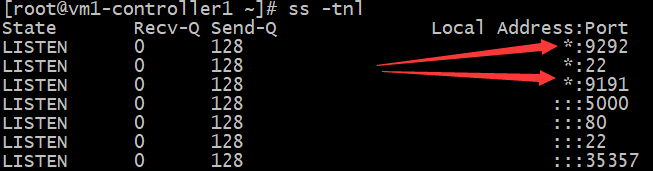

Verify the ports:

Create the domain, users, projects, and roles:

Set environment variables with the admin token to perform these operations:

[root@vm1-controller1 ~]# mkdir scripts

[root@vm1-controller1 ~]# vim scripts/admin.sh

#!/bin/bash

export OS_TOKEN=5d9fd0985867b7c5b173

export OS_URL=http://192.168.7.101:35357/v3

export OS_IDENTITY_API_VERSION=3

Test for admin access (before the variables are loaded, the command fails):

[root@vm1-controller1 ~]# openstack user list

Missing value auth-url required for auth plugin password

Load the admin environment. Empty output with no error shows that admin access works.

[root@vm1-controller1 ~]# source scripts/admin.sh

[root@vm1-controller1 ~]# openstack user list

[null]...

Create the default domain:

[root@vm1-controller1 ~]# openstack domain create \

--description "Default Domain" default

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | Default Domain |

| enabled | True |

| id | fe1fb493f1f94807b1d2df002d52fcba |

| name | default |

+-------------+----------------------------------+

Create an admin project (one project per person, which isolates their virtual machines):

[root@vm1-controller1 ~]# openstack project create --domain default \

--description "Admin Project" admin

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | Admin Project |

| domain_id | fe1fb493f1f94807b1d2df002d52fcba |

| enabled | True |

| id | 604257738d254dd6a0dafc91c962b6bf |

| is_domain | False |

| name | admin |

| parent_id | fe1fb493f1f94807b1d2df002d52fcba |

+-------------+----------------------------------+

List the current domains:

[root@vm1-controller1 ~]# openstack domain list

+----------------------------------+---------+---------+----------------+

| ID | Name | Enabled | Description |

+----------------------------------+---------+---------+----------------+

| fe1fb493f1f94807b1d2df002d52fcba | default | True | Default Domain |

+----------------------------------+---------+---------+----------------+

Delete a domain (test only; skip this when following the rest of the guide, which keeps using this domain):

[root@vm1-controller1 ~]# openstack domain delete fe1fb493f1f94807b1d2df002d52fcba

Create the admin user and the admin role:

A project can contain multiple roles. Currently, only roles that are already defined in /etc/keystone/policy.json can be created:

[root@vm1-controller1 ~]# openstack user create --domain default \

--password-prompt admin

User Password:admin

Repeat User Password:admin

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | fe1fb493f1f94807b1d2df002d52fcba |

| enabled | True |

| id | 24fd631949c64206a88cb227af4b9863 |

| name | admin |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

[root@vm1-controller1 ~]# openstack role create admin

+-----------+----------------------------------+

| Field | Value |

+-----------+----------------------------------+

| domain_id | None |

| id | db640d3073514cd8854b18749668eacc |

| name | admin |

+-----------+----------------------------------+

List the users and roles:

[root@vm1-controller1 ~]# openstack user list

+----------------------------------+-------+

| ID | Name |

+----------------------------------+-------+

| 24fd631949c64206a88cb227af4b9863 | admin |

+----------------------------------+-------+

[root@vm1-controller1 ~]# openstack role list

+----------------------------------+-------+

| ID | Name |

+----------------------------------+-------+

| db640d3073514cd8854b18749668eacc | admin |

+----------------------------------+-------+

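Authorize the admin account:

Grant the admin user the admin role on the admin project. In other words, add a user named admin to the admin project and assign it the admin role; a role is a set of permissions: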

[root@vm1-controller1 ~]# openstack role add --project admin --user admin admin

[null]...

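The steps above can be repeated to create additional projects and users.

Create a service project (used for access and authentication between the services and Keystone; the accounts for the services are created in it):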

[root@vm1-controller1 ~]# openstack project create --domain default \

--description "Service Project" service

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | Service Project |

| domain_id | fe1fb493f1f94807b1d2df002d52fcba |

| enabled | True |

| id | 5a19f39e0c884e06965bfb130ea65338 |

| is_domain | False |

| name | service |

| parent_id | fe1fb493f1f94807b1d2df002d52fcba |

+-------------+----------------------------------+

Create a demo project (for demonstrations, testing, and so on):

[root@vm1-controller1 ~]# openstack project create --domain default \

--description "Demo Project" demo

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | Demo Project |

| domain_id | fe1fb493f1f94807b1d2df002d52fcba |

| enabled | True |

| id | 4df85cc0d51848f6948f48f7baa09ce9 |

| is_domain | False |

| name | demo |

| parent_id | fe1fb493f1f94807b1d2df002d52fcba |

+-------------+----------------------------------+

Create the demo user and the user role:

[root@vm1-controller1 ~]# openstack user create --domain default \

--password-prompt demo

User Password:demo

Repeat User Password:demo

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | fe1fb493f1f94807b1d2df002d52fcba |

| enabled | True |

| id | 1a59e1ce8a0b4837894fc755caee129e |

| name | demo |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

[root@vm1-controller1 ~]# openstack role create user

+-----------+----------------------------------+

| Field | Value |

+-----------+----------------------------------+

| domain_id | None |

| id | 82025a1f353846938519ef541ead1b1a |

| name | user |

+-----------+----------------------------------+

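Authorize the demo account:

Grant the demo user the user role on the demo project. In other words, add a user named demo to the demo project and assign it the user role; a role is a set of permissions: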

[root@vm1-controller1 ~]# openstack role add --project demo --user demo user

[null]...

Service registration:

Register the Keystone service in OpenStack and list the current services:

[root@vm1-controller1 ~]# openstack service create --name keystone \

--description "OpenStack Identity" identity

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | OpenStack Identity |

| enabled | True |

| id | 59c196d754b344faa7198d3b14f805ce |

| name | keystone |

| type | identity |

+-------------+----------------------------------+

[root@vm1-controller1 ~]# openstack service list

+----------------------------------+----------+----------+

| ID | Name | Type |

+----------------------------------+----------+----------+

| 59c196d754b344faa7198d3b14f805ce | keystone | identity |

+----------------------------------+----------+----------+

Configure HAProxy

[root@vm2-haproxy-keep1 ~]# vim /etc/haproxy/haproxy.cfg

listen keystone_public_url_5000

bind 192.168.7.248:5000

mode tcp

log global

balance source

server keystone1 192.168.7.101:5000 check inter 5000 rise 3 fall 3

listen keystone_admin_url_35357

bind 192.168.7.248:35357

mode tcp

log global

balance source

server keystone1 192.168.7.101:35357 check inter 5000 rise 3 fall 3

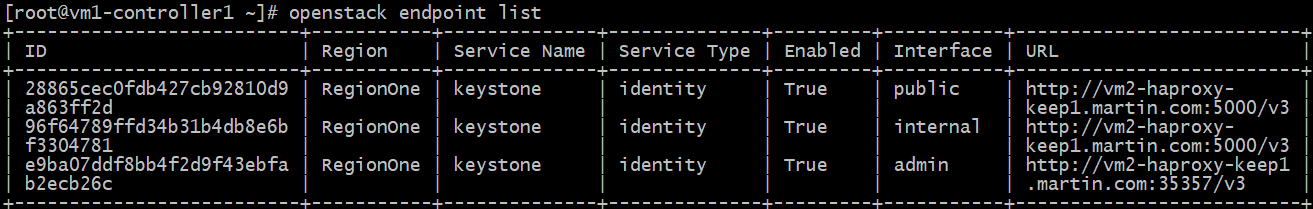

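Create the endpoints

If an endpoint is created incorrectly or created more than once, delete all of them and register again, because it is hard to tell afterwards which entries are correct. Register the endpoints with the keepalived VIP (here through the host name that resolves to it), which HAProxy listens on.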

[root@vm1-controller1 ~]# openstack endpoint create --region RegionOne identity \

public http://vm2-haproxy-keep1.martin.com:5000/v3

+--------------+---------------------------------------------+

| Field | Value |

+--------------+---------------------------------------------+

| enabled | True |

| id | 28865cec0fdb427cb92810d9a863ff2d |

| interface | public |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 59c196d754b344faa7198d3b14f805ce |

| service_name | keystone |

| service_type | identity |

| url | http://vm2-haproxy-keep1.martin.com:5000/v3 |

+--------------+---------------------------------------------+

[root@vm1-controller1 ~]# openstack endpoint create --region RegionOne identity \

internal http://vm2-haproxy-keep1.martin.com:5000/v3

+--------------+---------------------------------------------+

| Field | Value |

+--------------+---------------------------------------------+

| enabled | True |

| id | 96f64789ffd34b31b4db8e6bf3304781 |

| interface | internal |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 59c196d754b344faa7198d3b14f805ce |

| service_name | keystone |

| service_type | identity |

| url | http://vm2-haproxy-keep1.martin.com:5000/v3 |

+--------------+---------------------------------------------+

[root@vm1-controller1 ~]# openstack endpoint create --region RegionOne identity \

admin http://vm2-haproxy-keep1.martin.com:35357/v3

+--------------+----------------------------------------------+

| Field | Value |

+--------------+----------------------------------------------+

| enabled | True |

| id | e9ba07ddf8bb4f2d9f43ebfab2ecb26c |

| interface | admin |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 59c196d754b344faa7198d3b14f805ce |

| service_name | keystone |

| service_type | identity |

| url | http://vm2-haproxy-keep1.martin.com:35357/v3 |

+--------------+----------------------------------------------+
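The three commands differ only in the interface name and, for admin, the port. A minimal sketch that prints them for review instead of running them, assuming a hypothetical helper `endpoint_commands`:

```shell
#!/bin/bash
# endpoint_commands SERVICE_TYPE HOST PUBLIC_PORT ADMIN_PORT URL_PATH:
# print the three `openstack endpoint create` commands so they can be
# reviewed before anything is registered.
endpoint_commands() {
    local svc_type="$1" host="$2" public_port="$3" admin_port="$4" url_path="$5"
    local iface port
    for iface in public internal admin; do
        if [ "$iface" = admin ]; then
            port="$admin_port"
        else
            port="$public_port"
        fi
        echo "openstack endpoint create --region RegionOne $svc_type $iface http://$host:$port$url_path"
    done
}

endpoint_commands identity vm2-haproxy-keep1.martin.com 5000 35357 /v3
```

Printing first is a deliberate choice here: as noted above, a wrong or duplicated endpoint means deleting and re-registering all of them.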

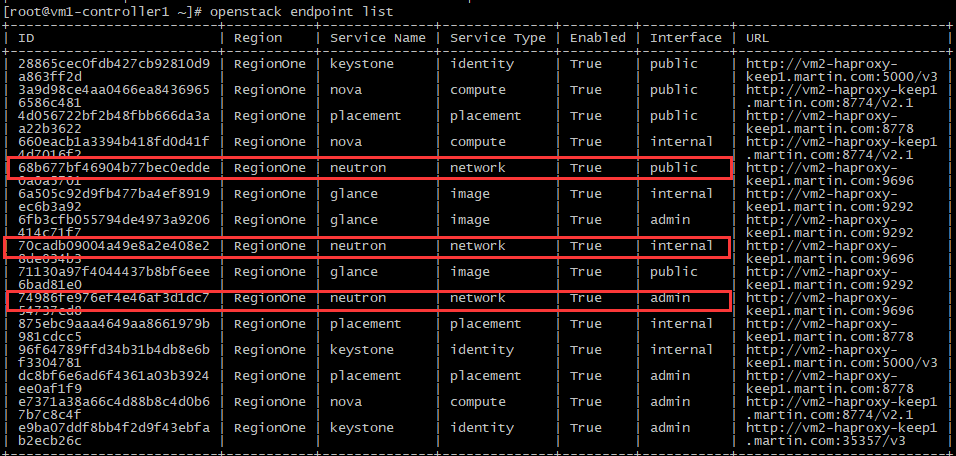

List the registered endpoints:

[root@vm1-controller1 ~]# openstack endpoint list

Verify connectivity

[root@vm1-controller1 ~]# telnet vm2-haproxy-keep1.martin.com 5000

Trying 192.168.7.248...

Connected to vm2-haproxy-keep1.martin.com.

Escape character is '^]'.

[root@vm1-controller1 ~]# telnet vm2-haproxy-keep1.martin.com 35357

Trying 192.168.7.248...

Connected to vm2-haproxy-keep1.martin.com.

Escape character is '^]'.

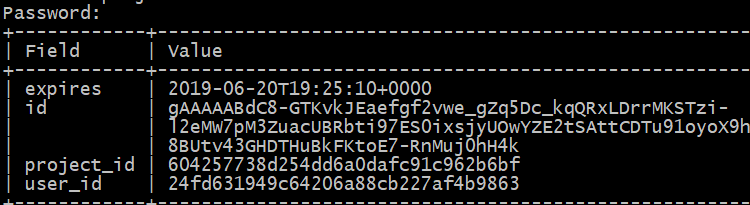

Test whether Keystone can authenticate users (use a new terminal):

As the admin user (password admin), request an authentication token:

export OS_IDENTITY_API_VERSION=3

[root@vm1-controller1 ~]# openstack --os-auth-url http://vm2-haproxy-keep1.martin.com:35357/v3 \

--os-project-domain-name default --os-user-domain-name default \

--os-project-name admin --os-username admin token issue

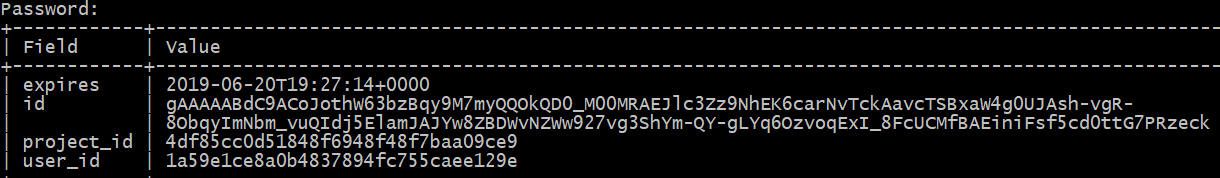

As the demo user (password demo), request an authentication token:

export OS_IDENTITY_API_VERSION=3

[root@vm1-controller1 ~]# openstack --os-auth-url http://vm2-haproxy-keep1.martin.com:35357/v3 \

--os-project-domain-name default --os-user-domain-name default \

--os-project-name demo --os-username demo token issue

Set the environment variables with a script:

[root@vm1-controller1 ~]# cat scripts/admin.sh

#!/bin/bash

export OS_PROJECT_DOMAIN_NAME=default

export OS_USER_DOMAIN_NAME=default

export OS_PROJECT_NAME=admin

export OS_USERNAME=admin

export OS_PASSWORD=admin

export OS_AUTH_URL=http://vm2-haproxy-keep1.martin.com:35357/v3

export OS_IDENTITY_API_VERSION=3

export OS_IMAGE_API_VERSION=2
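A matching script for the demo user is a natural companion. The sketch below is an assumption rather than part of the original notes: the file name `demo.sh` is hypothetical, the password is the one entered when the demo user was created, and port 5000 is chosen on the assumption that the demo user does not need the admin endpoint.

```shell
#!/bin/bash
# Write a credentials script for the demo user (the file name is illustrative).
mkdir -p scripts
cat > scripts/demo.sh <<'EOF'
#!/bin/bash
export OS_PROJECT_DOMAIN_NAME=default
export OS_USER_DOMAIN_NAME=default
export OS_PROJECT_NAME=demo
export OS_USERNAME=demo
export OS_PASSWORD=demo
export OS_AUTH_URL=http://vm2-haproxy-keep1.martin.com:5000/v3
export OS_IDENTITY_API_VERSION=3
export OS_IMAGE_API_VERSION=2
EOF

# Load the variables into the current shell and show who we are now.
. scripts/demo.sh
echo "$OS_USERNAME@$OS_PROJECT_NAME"
```

Keeping one script per user makes it easy to switch identities in a terminal by sourcing the other file.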

4. Deploying the Glance image service

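Glance is the OpenStack Image service component.

- The glance service listens on port 9292 by default. It accepts REST API requests and then relies on other modules (glance-registry and the image store) to retrieve, upload, and delete images.

- Glance provides a RESTful API for querying virtual machine image metadata and for retrieving the images themselves.

- Through Glance, virtual machine images can be kept on several kinds of storage, such as simple file storage or object storage (for example the OpenStack swift project). Before a virtual machine can be created, its image must first be uploaded to Glance.

- Listing, deleting, and uploading images are all managed through Glance.

- Glance has two main services: glance-api, which handles image deletion, upload, and retrieval, and glance-registry.

- glance-registry

- It talks to the MySQL database to store and retrieve image metadata, and provides the REST interface for image metadata.

- Through glance-registry, image data can be written to or read from the database.

- glance-registry listens on port 9191.

- The glance database has two main tables: the image table and the image property table.

- The image table stores information such as the image format and size.

- The image property table stores the image's custom properties.

image store is a storage interface layer

- Through this interface Glance can obtain images.

- The image store supports Amazon S3, OpenStack's own swift, and distributed storage such as ceph, GlusterFS, and sheepdog.

- The image store is the interface for saving and reading images, but it is only an interface; the actual implementation must be provided by an external backend.

- Glance does not need a message queue, but it does need a database and Keystone.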

Create and initialize the database:

On the MySQL server, create the glance database and grant access:

MariaDB [(none)]> CREATE DATABASE glance;

MariaDB [(none)]> GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'%' \

IDENTIFIED BY 'glance123';

Verify the database connection:

On the controller, connect to MySQL as the glance user.

[root@vm1-controller1 ~]# mysql -uglance -hvm2-haproxy-keep1.martin.com -pglance123

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 42

Server version: 10.1.20-MariaDB MariaDB Server

Copyright (c) 2000, 2016, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]> show databases;

+--------------------+

| Database |

+--------------------+

| glance |

| information_schema |

+--------------------+

2 rows in set (0.00 sec)

Create the glance user and grant it the admin role:

[root@vm1-controller1 ~]# openstack user create --domain default \

--password-prompt glance

User Password:glance

Repeat User Password:glance

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | fe1fb493f1f94807b1d2df002d52fcba |

| enabled | True |

| id | a06b31c8892747d38f284eb31460a76c |

| name | glance |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

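Authorize the glance account:

Grant the glance user the admin role on the service project. In other words, add a user named glance to the service project and assign it the admin role; a role is a set of permissions: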

[root@vm1-controller1 ~]# openstack role add --project service --user glance admin

[null]...

Create the glance service entity:

[root@vm1-controller1 ~]# openstack service create --name glance \

--description "OpenStack Image" image

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | OpenStack Image |

| enabled | True |

| id | 9b466bc3fde646669007141e3e6cb4ca |

| name | glance |

| type | image |

+-------------+----------------------------------+

Create the Image service API endpoints:

[root@vm1-controller1 ~]# openstack endpoint create --region RegionOne \

image public http://vm2-haproxy-keep1.martin.com:9292

+--------------+------------------------------------------+

| Field | Value |

+--------------+------------------------------------------+

| enabled | True |

| id | 71130a97f4044437b8bf6eee6bad81e0 |

| interface | public |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 9b466bc3fde646669007141e3e6cb4ca |

| service_name | glance |

| service_type | image |

| url | http://vm2-haproxy-keep1.martin.com:9292 |

+--------------+------------------------------------------+

[root@vm1-controller1 ~]# openstack endpoint create --region RegionOne \

image internal http://vm2-haproxy-keep1.martin.com:9292

+--------------+------------------------------------------+

| Field | Value |

+--------------+------------------------------------------+

| enabled | True |

| id | 6a505c92d9fb477ba4ef8919ec6b3a92 |

| interface | internal |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 9b466bc3fde646669007141e3e6cb4ca |

| service_name | glance |

| service_type | image |

| url | http://vm2-haproxy-keep1.martin.com:9292 |

+--------------+------------------------------------------+

[root@vm1-controller1 ~]# openstack endpoint create --region RegionOne \

image admin http://vm2-haproxy-keep1.martin.com:9292

+--------------+------------------------------------------+

| Field | Value |

+--------------+------------------------------------------+

| enabled | True |

| id | 6fb3cfb055794de4973a9206414c71f7 |

| interface | admin |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 9b466bc3fde646669007141e3e6cb4ca |

| service_name | glance |

| service_type | image |

| url | http://vm2-haproxy-keep1.martin.com:9292 |

+--------------+------------------------------------------+

Configure HAProxy to proxy glance

listen glance_api_9292

bind 192.168.7.248:9292

mode tcp

log global

balance source

server glance-api 192.168.7.101:9292 check inter 5000 rise 3 fall 3

listen glance_9191

bind 192.168.7.248:9191

mode tcp

log global

balance source

server glance 192.168.7.101:9191 check inter 5000 rise 3 fall 3

Install glance on the controller

[root@vm1-controller1 ~]# yum install openstack-glance -y

Edit /etc/glance/glance-api.conf and complete the following actions:

In the [database] section, configure database access:

[database]

# ...

connection = mysql+pymysql://glance:glance123@vm2-haproxy-keep1.martin.com/glance

In the [keystone_authtoken] and [paste_deploy] sections, configure Identity service access:

[keystone_authtoken]

# ...

auth_uri = http://vm2-haproxy-keep1.martin.com:5000

auth_url = http://vm2-haproxy-keep1.martin.com:35357

memcached_servers = vm2-haproxy-keep1.martin.com:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = glance

password = glance

[paste_deploy]

# ...

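flavor = keystone

In the [glance_store] section, configure the local file system store and the location of the image files:

If a dedicated storage server is used as the backend, mount it on /var/lib/glance/images first and start the glance services only afterwards.

NFS can be used, with rsync keeping a standby server in sync.

Both the NFS server and its clients need the nfs-utils package; without it, mount does not recognize the nfs file system type.

A domain name can be used for the mount, provided name resolution is set up in advance.

If the NFS server goes down, only the DNS record needs to be pointed at the backup NFS server.

After the NFS configuration file is changed, exportfs -r re-reads it without a service restart.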

[glance_store]

# ...

stores = file,http

default_store = file

filesystem_store_datadir = /var/lib/glance/images

Verify the contents of /etc/glance/glance-api.conf:

[database]

connection = mysql+pymysql://glance:glance123@vm2-haproxy-keep1.martin.com/glance

[glance_store]

stores = file,http

default_store = file

filesystem_store_datadir = /var/lib/glance/images

[keystone_authtoken]

auth_uri = http://vm2-haproxy-keep1.martin.com:5000

auth_url = http://vm2-haproxy-keep1.martin.com:35357

memcached_servers = vm2-haproxy-keep1.martin.com:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = glance

password = glance

[paste_deploy]

flavor = keystone

Edit /etc/glance/glance-registry.conf and complete the following actions:

In the [database] section, configure database access:

[database]

# ...

connection = mysql+pymysql://glance:glance123@vm2-haproxy-keep1.martin.com/glance

In the [keystone_authtoken] and [paste_deploy] sections, configure Identity service access:

[keystone_authtoken]

# ...

auth_uri = http://vm2-haproxy-keep1.martin.com:5000

auth_url = http://vm2-haproxy-keep1.martin.com:35357

memcached_servers = vm2-haproxy-keep1.martin.com:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = glance

password = glance

[paste_deploy]

# ...

flavor = keystone

Verify the contents of /etc/glance/glance-registry.conf:

[database]

connection = mysql+pymysql://glance:glance123@vm2-haproxy-keep1.martin.com/glance

[keystone_authtoken]

auth_uri = http://vm2-haproxy-keep1.martin.com:5000

auth_url = http://vm2-haproxy-keep1.martin.com:35357

memcached_servers = vm2-haproxy-keep1.martin.com:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = glance

password = glance

[paste_deploy]

flavor = keystone

Populate the Image service database:

[root@vm1-controller1 ~]# su -s /bin/sh -c "glance-manage db_sync" glance

Start the services and enable them at boot:

[root@vm1-controller1 ~]# systemctl enable openstack-glance-api openstack-glance-registry && \

systemctl start openstack-glance-api openstack-glance-registry

Verify the glance ports:

Verify that the service works

Download the source image and upload it as a test:

[root@vm1-controller1 ~]# wget http://download.cirros-cloud.net/0.3.5/cirros-0.3.5-x86_64-disk.img

[root@vm1-controller1 ~]# openstack image create "cirros" \

--file cirros-0.3.5-x86_64-disk.img \

--disk-format qcow2 --container-format bare \

--public

+------------------+------------------------------------------------------+

| Field | Value |

+------------------+------------------------------------------------------+

| checksum | f8ab98ff5e73ebab884d80c9dc9c7290 |

| container_format | bare |

| created_at | 2019-06-20T20:17:58Z |

| disk_format | qcow2 |

| file | /v2/images/e6b49b59-a598-4688-aa88-05c0b1eaacb2/file |

| id | e6b49b59-a598-4688-aa88-05c0b1eaacb2 |

| min_disk | 0 |

| min_ram | 0 |

| name | cirros |

| owner | 604257738d254dd6a0dafc91c962b6bf |

| protected | False |

| schema | /v2/schemas/image |

| size | 13267968 |

| status | active |

| tags | |

| updated_at | 2019-06-20T20:18:00Z |

| virtual_size | None |

| visibility | public |

+------------------+------------------------------------------------------+

Confirm the upload and check the image attributes:

[root@vm1-controller1 ~]# openstack image list

+--------------------------------------+--------+--------+

| ID | Name | Status |

+--------------------------------------+--------+--------+

| e6b49b59-a598-4688-aa88-05c0b1eaacb2 | cirros | active |

+--------------------------------------+--------+--------+

[root@vm1-controller1 ~]# ll /var/lib/glance/images/

total 12960

-rw-r----- 1 glance glance 13267968 Jun 21 04:18 e6b49b59-a598-4688-aa88-05c0b1eaacb2
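As an extra check, the stored file can be compared with the `checksum` field shown above, which in this release is understood to be the MD5 digest of the image data. A minimal sketch, assuming a hypothetical helper `image_md5_matches`:

```shell
#!/bin/bash
# image_md5_matches FILE EXPECTED_MD5: succeed if the MD5 digest of FILE
# equals EXPECTED_MD5.
image_md5_matches() {
    local actual
    actual="$(md5sum "$1" | awk '{ print $1 }')"
    [ "$actual" = "$2" ]
}

# On the controller, using the ID and checksum reported above:
#   image_md5_matches /var/lib/glance/images/e6b49b59-a598-4688-aa88-05c0b1eaacb2 \
#       f8ab98ff5e73ebab884d80c9dc9c7290 && echo "checksum OK"
```

A mismatch would suggest that the upload or the backing storage (for example an NFS mount) corrupted the file.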

Show the details of a specific image:

[root@vm1-controller1 ~]# openstack image show cirros

+------------------+------------------------------------------------------+

| Field | Value |

+------------------+------------------------------------------------------+

| checksum | f8ab98ff5e73ebab884d80c9dc9c7290 |

| container_format | bare |

| created_at | 2019-06-20T20:17:58Z |

| disk_format | qcow2 |

| file | /v2/images/e6b49b59-a598-4688-aa88-05c0b1eaacb2/file |

| id | e6b49b59-a598-4688-aa88-05c0b1eaacb2 |

| min_disk | 0 |

| min_ram | 0 |

| name | cirros |

| owner | 604257738d254dd6a0dafc91c962b6bf |

| protected | False |

| schema | /v2/schemas/image |

| size | 13267968 |

| status | active |

| tags | |

| updated_at | 2019-06-20T20:18:00Z |

| virtual_size | None |

| visibility | public |

+------------------+------------------------------------------------------+

5. Deploying the Nova controller and compute nodes

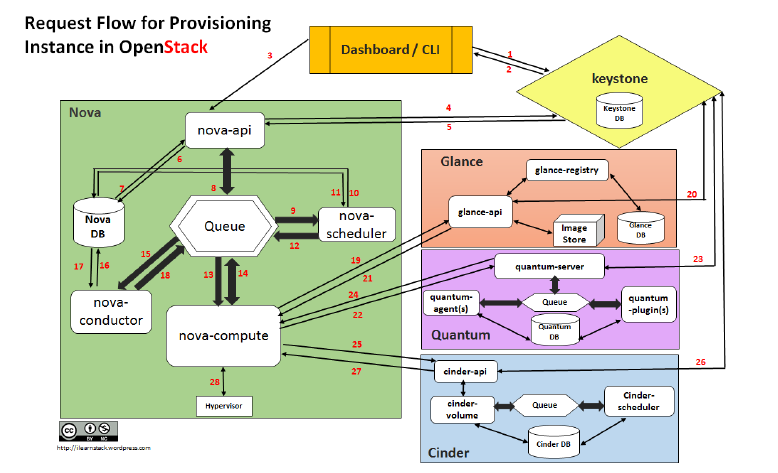

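Nova is one of the earliest OpenStack components. It is split into controller and compute nodes. A compute node creates virtual machines through nova-compute, which calls KVM via libvirt. The nova services communicate with each other through RabbitMQ queues. The components and their functions are:

API: receives and responds to external requests.

Scheduler: decides which physical host a virtual machine is placed on.

Conductor: the middleware through which compute nodes access the database.

Consoleauth: handles authorization for console access.

Novncproxy: the VNC proxy, used to display a virtual machine's console.

Functions of nova-api:

The nova-api component implements the RESTful API. It receives and responds to compute API requests from end users, accepts external requests, and forwards them to the other service components through the message queue. It is also compatible with the EC2 API, so EC2 management tools can be used for day-to-day nova management.

nova scheduler

The nova scheduler module decides on which host (compute node) a virtual machine is created. Choosing the physical node takes two steps: filtering (filter), which selects the hosts that are able to run the virtual machine, and weighting (weight), which ranks the remaining hosts and picks the most suitable one.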
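The scheduling idea can be illustrated with a toy example. This is not Nova's scheduler code: the host list, the input layout (host name, then free RAM in MB), and the helper `pick_host` are all made up for illustration. It first filters out hosts without enough free RAM, then weighs the rest by picking the host with the most free RAM.

```shell
#!/bin/bash
# pick_host REQUIRED_MB: read "name free_mb" lines on stdin.
# Filter step: ignore hosts with less than REQUIRED_MB free.
# Weigh step: among the rest, print the host with the most free RAM.
# Fails (non-zero exit) if no host passes the filter.
pick_host() {
    awk -v need="$1" '
        $2 + 0 >= need + 0 && (best == "" || $2 + 0 > best_free) {
            best = $1; best_free = $2 + 0
        }
        END { if (best != "") print best; else exit 1 }
    '
}

# compute3 is filtered out; compute2 wins the weighing step.
printf '%s\n' "compute1 2048" "compute2 8192" "compute3 512" | pick_host 1024
```

Real schedulers combine many filters and weighers, but the order is the same: filter first, then rank what remains.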

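Install and configure the nova controller node:

Create and initialize the databases:

On the MySQL server, create the nova_api, nova, and nova_cell0 databases and grant access: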

MariaDB [(none)]> CREATE DATABASE nova_api;

MariaDB [(none)]> CREATE DATABASE nova;

MariaDB [(none)]> CREATE DATABASE nova_cell0;

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'%' \

IDENTIFIED BY 'nova123';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'%' \

IDENTIFIED BY 'nova123';

MariaDB [(none)]> GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'%' \

IDENTIFIED BY 'nova123';
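
The three statements above differ only in the database name, so they can be generated in a loop. The following is a minimal sketch, assuming the same `nova` account and `nova123` password used in this guide; the helper name `nova_grant_sql` is hypothetical, and its output is meant to be reviewed before it is piped to the `mysql` client on the database server.

```shell
# Print CREATE DATABASE / GRANT statements for each nova database.
# nova_grant_sql is a hypothetical helper; the user and password default
# to the values used in this guide.
nova_grant_sql() {
    local user="${1:-nova}" pass="${2:-nova123}" db
    for db in nova_api nova nova_cell0; do
        printf "CREATE DATABASE IF NOT EXISTS %s;\n" "$db"
        printf "GRANT ALL PRIVILEGES ON %s.* TO '%s'@'%%' IDENTIFIED BY '%s';\n" \
            "$db" "$user" "$pass"
    done
}

# Dry run: only prints the SQL.
nova_grant_sql
```

To apply the statements, the output could be piped into the client, for example `nova_grant_sql | mysql -uroot -p`.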

Verify the database connection:

On the controller, connect to MySQL with the nova account.

[root@vm1-controller1 ~]# mysql -unova -hvm2-haproxy-keep1.martin.com -pnova123

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 57

Server version: 10.1.20-MariaDB MariaDB Server

Copyright (c) 2000, 2016, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| nova |

| nova_api |

| nova_cell0 |

+--------------------+

4 rows in set (0.00 sec)

Configure HAProxy to proxy nova:

listen nova_8774

bind 192.168.7.248:8774

mode tcp

log global

balance source

server nova1 192.168.7.101:8774 check inter 5000 rise 3 fall 3

listen nova_8778

bind 192.168.7.248:8778

mode tcp

log global

balance source

server nova1 192.168.7.101:8778 check inter 5000 rise 3 fall 3

listen nova_vnc_6080

bind 192.168.7.248:6080

mode tcp

log global

balance source

server nova_vnc 192.168.7.101:6080 check inter 5000 rise 3 fall 3
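
The three `listen` stanzas above share the same shape and differ only in name and port. As a sketch under that assumption, a small generator can print them; `haproxy_listen` is a hypothetical helper, the VIP and backend address are the ones used in this guide, and the generated server name (`nova1`) is only a label.

```shell
# haproxy_listen NAME VIP PORT BACKEND_IP: print one TCP listen stanza.
# Hypothetical helper; it only prints text and does not touch haproxy.cfg.
haproxy_listen() {
    local name="$1" vip="$2" port="$3" backend="$4"
    cat <<EOF
listen ${name}_${port}
  bind ${vip}:${port}
  mode tcp
  log global
  balance source
  server ${name}1 ${backend}:${port} check inter 5000 rise 3 fall 3
EOF
}

# Print stanzas for the nova API, Placement API and noVNC proxy ports.
for port in 8774 8778 6080; do
    haproxy_listen nova 192.168.7.248 "$port" 192.168.7.101
done
```

The output can be appended to the HAProxy configuration after review, followed by a reload of the HAProxy service.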

Create the nova user and grant it the admin role:

[root@vm1-controller1 ~]# openstack user create --domain default \

--password-prompt nova

User Password:nova

Repeat User Password:nova

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | fe1fb493f1f94807b1d2df002d52fcba |

| enabled | True |

| id | 036551023231485690664d40266a5b60 |

| name | nova |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

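Grant privileges to the nova account:

Give the nova user the admin role on the service project. In other words, add a user named nova to the service project and assign it the admin role; a role is a set of permissions: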

[root@vm1-controller1 ~]# openstack role add --project service --user nova admin


Create the nova service entity:

[root@vm1-controller1 ~]# openstack service create --name nova \

--description "OpenStack Compute" compute

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | OpenStack Compute |

| enabled | True |

| id | 6d7d57880933489e8fb7bcc587226d85 |

| name | nova |

| type | compute |

+-------------+----------------------------------+

Create the API endpoints for the nova service:

[root@vm1-controller1 ~]# openstack endpoint create --region RegionOne \

compute public http://vm2-haproxy-keep1.martin.com:8774/v2.1

+--------------+-----------------------------------------------+

| Field | Value |

+--------------+-----------------------------------------------+

| enabled | True |

| id | 3a9d98ce4aa0466ea84369656586c481 |

| interface | public |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 6d7d57880933489e8fb7bcc587226d85 |

| service_name | nova |

| service_type | compute |

| url | http://vm2-haproxy-keep1.martin.com:8774/v2.1 |

+--------------+-----------------------------------------------+

[root@vm1-controller1 ~]# openstack endpoint create --region RegionOne \

compute internal http://vm2-haproxy-keep1.martin.com:8774/v2.1

+--------------+-----------------------------------------------+

| Field | Value |

+--------------+-----------------------------------------------+

| enabled | True |

| id | 660eacb1a3394b418fd0d41f4d7016f2 |

| interface | internal |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 6d7d57880933489e8fb7bcc587226d85 |

| service_name | nova |

| service_type | compute |

| url | http://vm2-haproxy-keep1.martin.com:8774/v2.1 |

+--------------+-----------------------------------------------+

[root@vm1-controller1 ~]# openstack endpoint create --region RegionOne \

compute admin http://vm2-haproxy-keep1.martin.com:8774/v2.1

+--------------+-----------------------------------------------+

| Field | Value |

+--------------+-----------------------------------------------+

| enabled | True |

| id | e7371a38a66c4d88b8c4d0b67b7c8c4f |

| interface | admin |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 6d7d57880933489e8fb7bcc587226d85 |

| service_name | nova |

| service_type | compute |

| url | http://vm2-haproxy-keep1.martin.com:8774/v2.1 |

+--------------+-----------------------------------------------+
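
The public, internal and admin endpoints above are created with the same command and URL, varying only the interface. A minimal dry-run sketch, assuming that pattern holds for every service; `endpoint_cmds` is a hypothetical helper that only prints the `openstack endpoint create` commands shown in this guide.

```shell
# endpoint_cmds SERVICE_TYPE URL [REGION]: print the three endpoint commands.
# Hypothetical helper; it echoes the commands instead of running them.
endpoint_cmds() {
    local service="$1" url="$2" region="${3:-RegionOne}" iface
    for iface in public internal admin; do
        echo "openstack endpoint create --region $region $service $iface $url"
    done
}

endpoint_cmds compute http://vm2-haproxy-keep1.martin.com:8774/v2.1
```

The same helper could be called with `placement` and the port 8778 URL; pipe the output to `sh` only after sourcing the admin credentials.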

Create the placement user:

[root@vm1-controller1 ~]# openstack user create --domain default \

--password-prompt placement

User Password:placement

Repeat User Password:placement

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | fe1fb493f1f94807b1d2df002d52fcba |

| enabled | True |

| id | afcff33851fa434b8e26d4c7f6f74534 |

| name | placement |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

Grant privileges to the placement account:

[root@vm1-controller1 ~]# openstack role add --project service --user placement admin


Create the placement service entity:

[root@vm1-controller1 ~]# openstack service create --name placement \

--description "Placement API" placement

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | Placement API |

| enabled | True |

| id | 68f3513b1e02482cb8325149c177c13a |

| name | placement |

| type | placement |

+-------------+----------------------------------+

Create the API endpoints for the Placement service:

[root@vm1-controller1 ~]# openstack endpoint create --region RegionOne \

placement public http://vm2-haproxy-keep1.martin.com:8778

+--------------+------------------------------------------+

| Field | Value |

+--------------+------------------------------------------+

| enabled | True |

| id | 4d056722bf2b48fbb666da3aa22b3622 |

| interface | public |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 68f3513b1e02482cb8325149c177c13a |

| service_name | placement |

| service_type | placement |

| url | http://vm2-haproxy-keep1.martin.com:8778 |

+--------------+------------------------------------------+

[root@vm1-controller1 ~]# openstack endpoint create --region RegionOne \

placement internal http://vm2-haproxy-keep1.martin.com:8778

+--------------+------------------------------------------+

| Field | Value |

+--------------+------------------------------------------+

| enabled | True |

| id | 875ebc9aaa4649aa8661979b981cdcc5 |

| interface | internal |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 68f3513b1e02482cb8325149c177c13a |

| service_name | placement |

| service_type | placement |

| url | http://vm2-haproxy-keep1.martin.com:8778 |

+--------------+------------------------------------------+

[root@vm1-controller1 ~]# openstack endpoint create --region RegionOne \

placement admin http://vm2-haproxy-keep1.martin.com:8778

+--------------+------------------------------------------+

| Field | Value |

+--------------+------------------------------------------+

| enabled | True |

| id | dc8bf6e6ad6f4361a03b3924ee0af1f9 |

| interface | admin |

| region | RegionOne |

| region_id | RegionOne |

| service_id | 68f3513b1e02482cb8325149c177c13a |

| service_name | placement |

| service_type | placement |

| url | http://vm2-haproxy-keep1.martin.com:8778 |

+--------------+------------------------------------------+

Install the nova packages on the controller:

[root@vm1-controller1 ~]# yum install openstack-nova-api openstack-nova-conductor \

openstack-nova-console openstack-nova-novncproxy \

openstack-nova-scheduler openstack-nova-placement-api

Edit /etc/nova/nova.conf and complete the following steps:

In the [DEFAULT] section, enable only the compute and metadata APIs:

[DEFAULT]

# ...

enabled_apis=osapi_compute,metadata

In the [api_database] and [database] sections, configure database access:

[api_database]

# ...

connection = mysql+pymysql://nova:nova123@vm2-haproxy-keep1.martin.com/nova_api

[database]

# ...

connection = mysql+pymysql://nova:nova123@vm2-haproxy-keep1.martin.com/nova

In the [DEFAULT] section, configure RabbitMQ message queue access:

[DEFAULT]

# ...

transport_url=rabbit://openstack:123456@vm2-haproxy-keep1.martin.com

In the [api] and [keystone_authtoken] sections, configure Identity service access:

[api]

# ...

auth_strategy=keystone

[keystone_authtoken]

# ...

auth_uri = http://vm2-haproxy-keep1.martin.com:5000

auth_url = http://vm2-haproxy-keep1.martin.com:35357

memcached_servers = vm2-haproxy-keep1.martin.com:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = nova

password = nova

In the [DEFAULT] section, set my_ip to the IP address of the controller node's management interface:

[DEFAULT]

# ...

my_ip=192.168.7.101

In the [DEFAULT] section, enable support for the Networking service:

[DEFAULT]

# ...

use_neutron=true

firewall_driver=nova.virt.libvirt.firewall.IptablesFirewallDriver

In the [vnc] section, configure the VNC proxy to use the management interface IP address of the controller node:

[vnc]

enabled=true

# ...

vncserver_listen=$my_ip

vncserver_proxyclient_address=$my_ip

In the [glance] section, configure the location of the Image service API:

[glance]

# ...

api_servers = http://vm2-haproxy-keep1.martin.com:9292

In the [oslo_concurrency] section, configure the lock path:

[oslo_concurrency]

# ...

lock_path=/var/lib/nova/tmp

In the [placement] section, configure the Placement API:

[placement]

# ...

os_region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://vm2-haproxy-keep1.martin.com:35357/v3

username = placement

password = placement

Patch the Apache configuration to allow access to the Placement API, then restart the service with systemctl restart httpd.

Due to a packaging bug, you must enable access to the Placement API by adding the following configuration to /etc/httpd/conf.d/00-nova-placement-api.conf:

<Directory /usr/bin>

<IfVersion >= 2.4>

Require all granted

</IfVersion>

<IfVersion < 2.4>

Order allow,deny

Allow from all

</IfVersion>

</Directory>

Filter out comments and blank lines to review the effective configuration and catch mistakes:

[root@vm1-controller1 ~]# grep -v "^#" /etc/nova/nova.conf | grep -v "^$"

[DEFAULT]

my_ip=192.168.7.101

use_neutron=true

firewall_driver=nova.virt.libvirt.firewall.IptablesFirewallDriver

enabled_apis=osapi_compute,metadata

transport_url=rabbit://openstack:123456@192.168.7.104

[api]

auth_strategy=keystone

[api_database]

connection = mysql+pymysql://nova:nova123@vm2-haproxy-keep1.martin.com/nova_api

[database]

connection = mysql+pymysql://nova:nova123@vm2-haproxy-keep1.martin.com/nova

[glance]

api_servers = http://vm2-haproxy-keep1.martin.com:9292

[keystone_authtoken]

auth_uri = http://vm2-haproxy-keep1.martin.com:5000

auth_url = http://vm2-haproxy-keep1.martin.com:35357

memcached_servers = vm2-haproxy-keep1.martin.com:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = nova

password = nova

[neutron]

url = http://vm2-haproxy-keep1.martin.com:9696

auth_url = http://vm2-haproxy-keep1.martin.com:35357

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = neutron

password = neutron

service_metadata_proxy = true

metadata_proxy_shared_secret = 20190621

[oslo_concurrency]

lock_path=/var/lib/nova/tmp

[placement]

os_region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://vm2-haproxy-keep1.martin.com:35357/v3

username = placement

password = placement

[vnc]

enabled=true

vncserver_listen=$my_ip

vncserver_proxyclient_address=$my_ip
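
To spot-check a single option instead of reading the whole filtered listing, a small INI reader is enough. This is a sketch, not part of any OpenStack tooling: `ini_get` is a hypothetical helper that assumes one `key=value` (or `key = value`) pair per line and section headers written exactly as `[name]`.

```shell
# ini_get FILE SECTION KEY: print the value of KEY inside [SECTION].
# Hypothetical helper; comment lines are skipped, first match wins.
ini_get() {
    awk -v section="$2" -v key="$3" '
        /^[[:space:]]*#/ { next }                                  # skip comments
        /^\[.*\]$/ { in_section = ($0 == "[" section "]"); next }  # track section
        in_section {
            pos = index($0, "=")
            if (pos == 0) next
            name = substr($0, 1, pos - 1)
            value = substr($0, pos + 1)
            gsub(/^[ \t]+|[ \t]+$/, "", name)
            gsub(/^[ \t]+|[ \t]+$/, "", value)
            if (name == key) { print value; exit }
        }
    ' "$1"
}

# Demonstration on a throwaway file rather than the real nova.conf.
demo="$(mktemp)"
printf '[DEFAULT]\nmy_ip=192.168.7.101\n[vnc]\nenabled=true\n' > "$demo"
ini_get "$demo" DEFAULT my_ip
rm -f "$demo"
```

On the controller this could be used as `ini_get /etc/nova/nova.conf placement username`.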

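Initialize the databases:

# The commands below, in order: populate the nova_api database, register the cell0 database, create the cell1 cell, and populate the nova database.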

su -s /bin/sh -c "nova-manage api_db sync" nova

su -s /bin/sh -c "nova-manage cell_v2 map_cell0" nova

su -s /bin/sh -c "nova-manage cell_v2 create_cell --name=cell1 --verbose" nova

631a9d96-d98b-41d2-8b50-085e68bc529e

su -s /bin/sh -c "nova-manage db sync" nova

Verify that cell0 and cell1 are registered correctly:

[root@vm1-controller1 ~]# nova-manage cell_v2 list_cells

+-------+--------------------------------------+

| Name | UUID |

+-------+--------------------------------------+

| cell0 | 00000000-0000-0000-0000-000000000000 |

| cell1 | 631a9d96-d98b-41d2-8b50-085e68bc529e |

+-------+--------------------------------------+
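
The check above can also be scripted. The sketch below assumes the table layout shown in this guide, with the cell name in the first column; `cells_registered` is a hypothetical helper that reads the `list_cells` output on standard input.

```shell
# cells_registered: read `nova-manage cell_v2 list_cells` output on stdin and
# succeed only if rows for both cell0 and cell1 are present.
# Hypothetical helper; assumes the cell name is the first table column.
cells_registered() {
    awk -F'|' '
        { gsub(/ /, "", $2) }          # strip padding around the name column
        $2 == "cell0" { c0 = 1 }
        $2 == "cell1" { c1 = 1 }
        END { exit !(c0 && c1) }
    '
}

# Demonstration with the rows from the table above.
cells_registered <<'EOF' && echo "cell0 and cell1 are registered"
| cell0 | 00000000-0000-0000-0000-000000000000 |
| cell1 | 631a9d96-d98b-41d2-8b50-085e68bc529e |
EOF
```

On the controller the real output would be piped in, for example `nova-manage cell_v2 list_cells | cells_registered`.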

Start the Compute services and configure them to start when the system boots:

[root@vm1-controller1 ~]# systemctl enable openstack-nova-api.service \

openstack-nova-consoleauth.service openstack-nova-scheduler.service \

openstack-nova-conductor.service openstack-nova-novncproxy.service

[root@vm1-controller1 ~]# systemctl start openstack-nova-api.service \

openstack-nova-consoleauth.service openstack-nova-scheduler.service \

openstack-nova-conductor.service openstack-nova-novncproxy.service

Script to restart the nova controller services:

#!/bin/bash

systemctl restart openstack-nova-api.service openstack-nova-consoleauth.service openstack-nova-scheduler.service openstack-nova-conductor.service openstack-nova-novncproxy.service

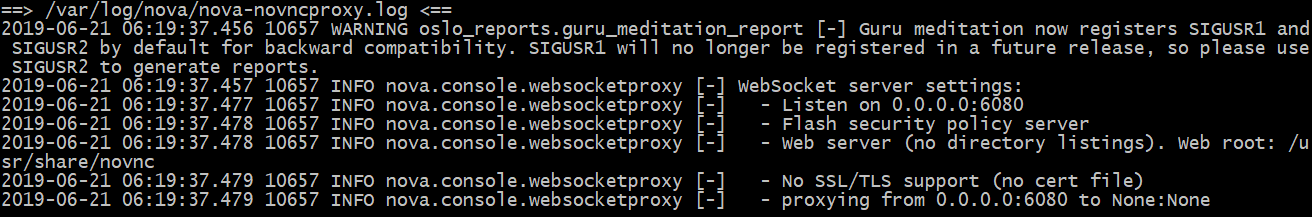

Check the nova logs:

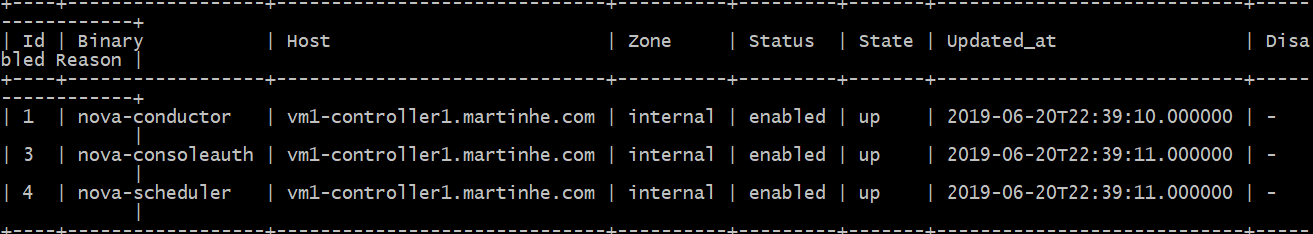

Check the RabbitMQ connections:

Verify the nova controller services:

[root@vm1-controller1 ~]# nova service-list

Deploy the nova compute node:

Install the package:

[root@vm5-node1 ~]# yum install openstack-nova-compute -y

Modify the configuration file so that it contains the following:

[root@vm5-node1 ~]# grep -v "^#" /etc/nova/nova.conf | grep -v "^$"

[DEFAULT]

my_ip=192.168.7.105

use_neutron=true

firewall_driver=nova.virt.libvirt.firewall.IptablesFirewallDriver

enabled_apis=osapi_compute,metadata

transport_url=rabbit://openstack:123456@192.168.7.104

rpc_backend=rabbit

[api]

auth_strategy=keystone

[api_database]

connection = mysql+pymysql://nova:nova123@vm2-haproxy-keep1.martin.com/nova_api

[database]

connection = mysql+pymysql://nova:nova123@vm2-haproxy-keep1.martin.com/nova

[glance]

api_servers = http://vm2-haproxy-keep1.martin.com:9292

[keystone_authtoken]

auth_uri = http://vm2-haproxy-keep1.martin.com:5000

auth_url = http://vm2-haproxy-keep1.martin.com:35357

memcached_servers = vm2-haproxy-keep1.martin.com:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = nova

password = nova

[libvirt]

virt_type=kvm

[neutron]

url = http://vm2-haproxy-keep1.martin.com:9696

auth_url = http://vm2-haproxy-keep1.martin.com:35357

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = neutron

password = neutron

service_metadata_proxy = true

metadata_proxy_shared_secret = 20190621

[oslo_concurrency]

lock_path=/var/lib/nova/tmp

[placement]

os_region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://vm2-haproxy-keep1.martin.com:35357/v3

username = placement

password = placement

[vnc]

enabled=true

vncserver_listen=0.0.0.0

vncserver_proxyclient_address=$my_ip

novncproxy_base_url=http://vm2-haproxy-keep1.martin.com:6080/vnc_auto.html

Check whether the compute node supports hardware acceleration:

[root@vm5-node1 ~]# egrep -c '(vmx|svm)' /proc/cpuinfo

1
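
A count of one or more means the CPU exposes hardware virtualization and `virt_type=kvm` can be kept. A count of zero means libvirt has to fall back to QEMU emulation (`virt_type=qemu`). The sketch below turns that rule into a small function; `pick_virt_type` is a hypothetical helper name.

```shell
# pick_virt_type COUNT: map the vmx/svm match count to a libvirt virt_type.
# Hypothetical helper: 0 matches -> qemu (software emulation), otherwise kvm.
pick_virt_type() {
    if [ "${1:-0}" -gt 0 ]; then
        echo kvm
    else
        echo qemu
    fi
}

# grep -c prints 0 and exits non-zero when nothing matches, hence "|| true".
count="$(grep -Ec '(vmx|svm)' /proc/cpuinfo 2>/dev/null || true)"
echo "virt_type=$(pick_virt_type "${count:-0}")"
```

The printed value is what would go under the [libvirt] section of /etc/nova/nova.conf on the compute node.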

On the compute node, add the domain name that maps to the HAProxy VIP to /etc/hosts:

[root@vm5-node1 ~]# vim /etc/hosts

192.168.7.248 vm2-haproxy-keep1.martin.com
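
When this step is repeated on several compute nodes, it helps to add the entry only if it is missing. A minimal sketch, assuming a plain hosts-file layout; `add_host_entry` is a hypothetical helper, and the demonstration writes to a temporary file instead of /etc/hosts.

```shell
# add_host_entry FILE IP NAME: append "IP NAME" unless NAME is already mapped.
# Hypothetical helper; pass /etc/hosts as FILE on a real node (requires root).
add_host_entry() {
    local file="$1" ip="$2" name="$3"
    if awk -v n="$name" '
            /^[[:space:]]*#/ { next }                       # ignore comments
            { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
            END { exit !found }
        ' "$file" 2>/dev/null; then
        return 0        # already present, leave the file untouched
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Demonstration: the second call is a no-op, so one line is printed.
hosts_demo="$(mktemp)"
add_host_entry "$hosts_demo" 192.168.7.248 vm2-haproxy-keep1.martin.com
add_host_entry "$hosts_demo" 192.168.7.248 vm2-haproxy-keep1.martin.com
cat "$hosts_demo"
rm -f "$hosts_demo"
```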

Start the Compute service on the compute node and enable it at boot:

[root@vm5-node1 ~]# systemctl enable libvirtd.service openstack-nova-compute.service

[root@vm5-node1 ~]# systemctl start libvirtd.service openstack-nova-compute.service

Add the compute node to the cell database:

[root@vm1-controller1 ~]# openstack hypervisor list

+----+----------------------+-----------------+---------------+-------+

| ID | Hypervisor Hostname | Hypervisor Type | Host IP | State |

+----+----------------------+-----------------+---------------+-------+

| 1 | vm5-node1.martin.com | QEMU | 192.168.7.105 | up |

+----+----------------------+-----------------+---------------+-------+

Discovering compute nodes

# Discover with a command

[root@vm1-controller1 ~]# su -s /bin/sh -c "nova-manage cell_v2 discover_hosts --verbose" nova

Found 2 cell mappings.

Skipping cell0 since it does not contain hosts.

Getting compute nodes from cell 'cell1': 631a9d96-d98b-41d2-8b50-085e68bc529e

Found 1 computes in cell: 631a9d96-d98b-41d2-8b50-085e68bc529e

Checking host mapping for compute host 'vm5-node1.martin.com': 91cd78f9-0cae-4c85-a26d-686345538c16

Creating host mapping for compute host 'vm5-node1.martin.com': 91cd78f9-0cae-4c85-a26d-686345538c16

# Periodic automatic discovery: uncomment the option below in the [scheduler] section

[root@vm1-controller1 ~]# vim /etc/nova/nova.conf

discover_hosts_in_cells_interval=300

# Restart the nova services

[root@vm1-controller1 ~]# bash scripts/nova_restart.sh

Verify the compute node:

[root@vm1-controller1 ~]# nova host-list

+------------------------------+-------------+----------+

| host_name | service | zone |

+------------------------------+-------------+----------+

| vm1-controller1.martinhe.com | conductor | internal |

| vm1-controller1.martinhe.com | consoleauth | internal |

| vm1-controller1.martinhe.com | scheduler | internal |

| vm5-node1.martin.com | compute | nova |

+------------------------------+-------------+----------+

[root@vm1-controller1 ~]# nova service-list

+----+------------------+------------------------------+----------+---------+-------+----------------------------+-----------------+

| Id | Binary | Host | Zone | Status | State | Updated_at | Disabled Reason |

+----+------------------+------------------------------+----------+---------+-------+----------------------------+-----------------+

| 1 | nova-conductor | vm1-controller1.martinhe.com | internal | enabled | up | 2019-06-20T23:13:51.000000 | - |

| 3 | nova-consoleauth | vm1-controller1.martinhe.com | internal | enabled | up | 2019-06-20T23:13:52.000000 | - |

| 4 | nova-scheduler | vm1-controller1.martinhe.com | internal | enabled | up | 2019-06-20T23:13:52.000000 | - |

| 7 | nova-compute | vm5-node1.martin.com | nova | enabled | up | 2019-06-20T23:13:49.000000 | - |

+----+------------------+------------------------------+----------+---------+-------+----------------------------+-----------------+

[root@vm1-controller1 ~]# nova image-list

WARNING: Command image-list is deprecated and will be removed after Nova 15.0.0 is released. Use python-glanceclient or openstackclient instead

+--------------------------------------+--------+--------+--------+

| ID | Name | Status | Server |

+--------------------------------------+--------+--------+--------+

| e6b49b59-a598-4688-aa88-05c0b1eaacb2 | cirros | ACTIVE | |

+--------------------------------------+--------+--------+--------+

[root@vm1-controller1 ~]# openstack image list

+--------------------------------------+--------+--------+

| ID | Name | Status |

+--------------------------------------+--------+--------+

| e6b49b59-a598-4688-aa88-05c0b1eaacb2 | cirros | active |

+--------------------------------------+--------+--------+

[root@vm1-controller1 ~]# openstack compute service list

# List the service components to confirm that each one registered successfully

+----+------------------+------------------------------+----------+---------+-------+----------------------------+

| ID | Binary | Host | Zone | Status | State | Updated At |

+----+------------------+------------------------------+----------+---------+-------+----------------------------+

| 1 | nova-conductor | vm1-controller1.martinhe.com | internal | enabled | up | 2019-06-20T23:11:41.000000 |

| 3 | nova-consoleauth | vm1-controller1.martinhe.com | internal | enabled | up | 2019-06-20T23:11:42.000000 |

| 4 | nova-scheduler | vm1-controller1.martinhe.com | internal | enabled | up | 2019-06-20T23:11:42.000000 |

| 7 | nova-compute | vm5-node1.martin.com | nova | enabled | up | 2019-06-20T23:11:39.000000 |

+----+------------------+------------------------------+----------+---------+-------+----------------------------+

[root@vm1-controller1 ~]# nova-status upgrade check

# Check that the cells, the Placement API and the compute nodes are working correctly

+---------------------------+

| Upgrade Check Results |

+---------------------------+

| Check: Cells v2 |

| Result: Success |

| Details: None |

+---------------------------+

| Check: Placement API |

| Result: Success |

| Details: None |

+---------------------------+

| Check: Resource Providers |

| Result: Success |

| Details: None |

+---------------------------+

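6: Deploy the networking service neutron

neutron is OpenStack's networking service. When OpenStack released its first version, Austin, in 2010, nova-network was included as a core component. Networking was later split into a separate project named Quantum; because of a trademark conflict (Quantum is also the name of a US company, whose hard-drive business was acquired by Maxtor, which was in turn acquired by Seagate), OpenStack renamed the project to Neutron in the Havana release. A brief introduction to its networking concepts follows:

Network: in a real-world network environment, switches connect multiple computers to form a network; in neutron, a network likewise connects multiple cloud instances.

Subnet: in a real-world network environment, a network can be divided into several logical subnets to isolate traffic; in neutron, a subnet also belongs to a network.

Port: a computer connects to a switch with a cable plugged into one of the switch's ports; in neutron, a port belongs to a subnet, that is, each instance's subnet corresponds to a port.

Router: connects different networks or subnets.

Network types:

Provider network: virtual machines are bridged to the physical host and must be in the same network range as the physical host.

Self-service network: users can create their own networks, which ultimately reach the external network through a virtual router.

Install and configure the neutron controller node:

Create and initialize the database:

On the MySQL server, create the neutron database and grant privileges: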

MariaDB [(none)]> CREATE DATABASE neutron;

MariaDB [(none)]> GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'%' \

IDENTIFIED BY 'neutron123';

Verify the database connection:

On the controller, connect to MySQL with the neutron account.

[root@vm1-controller1 ~]# mysql -uneutron -hvm2-haproxy-keep1.martin.com -pneutron123

Welcome to the MariaDB monitor. Commands end with ; or \g.

Your MariaDB connection id is 146

Server version: 10.1.20-MariaDB MariaDB Server

Copyright (c) 2000, 2016, Oracle, MariaDB Corporation Ab and others.

Type 'help;' or '\h' for help. Type '\c' to clear the current input statement.

MariaDB [(none)]> show databases;

+--------------------+

| Database |

+--------------------+

| information_schema |

| neutron |

+--------------------+

2 rows in set (0.00 sec)

Create the neutron user and grant it the admin role:

[root@vm1-controller1 ~]# openstack user create --domain default \

--password-prompt neutron

User Password:neutron

Repeat User Password:neutron

+---------------------+----------------------------------+

| Field | Value |

+---------------------+----------------------------------+

| domain_id | fe1fb493f1f94807b1d2df002d52fcba |

| enabled | True |

| id | 90c4caa000084addbf122ca7f1270e6b |

| name | neutron |

| options | {} |

| password_expires_at | None |

+---------------------+----------------------------------+

Grant privileges to the neutron account:

[root@vm1-controller1 ~]# openstack role add --project service --user neutron admin


Create the neutron service entity:

[root@vm1-controller1 ~]# openstack service create --name neutron \

--description "OpenStack Networking" network

+-------------+----------------------------------+

| Field | Value |

+-------------+----------------------------------+

| description | OpenStack Networking |

| enabled | True |

| id | e9dba693185b49e5b41aa279cfe7419d |

| name | neutron |

| type | network |

+-------------+----------------------------------+

Create the API endpoints for the Networking service:

[root@vm1-controller1 ~]# openstack endpoint create --region RegionOne \

network public http://vm2-haproxy-keep1.martin.com:9696

+--------------+------------------------------------------+

| Field | Value |

+--------------+------------------------------------------+

| enabled | True |

| id | 68b677bf46904b77bec0edde0a0a5701 |

| interface | public |

| region | RegionOne |

| region_id | RegionOne |

| service_id | e9dba693185b49e5b41aa279cfe7419d |

| service_name | neutron |

| service_type | network |

| url | http://vm2-haproxy-keep1.martin.com:9696 |

+--------------+------------------------------------------+

[root@vm1-controller1 ~]# openstack endpoint create --region RegionOne \

network internal http://vm2-haproxy-keep1.martin.com:9696

+--------------+------------------------------------------+

| Field | Value |

+--------------+------------------------------------------+

| enabled | True |

| id | 70cadb09004a49e8a2e408e28de034b3 |

| interface | internal |

| region | RegionOne |

| region_id | RegionOne |

| service_id | e9dba693185b49e5b41aa279cfe7419d |

| service_name | neutron |

| service_type | network |

| url | http://vm2-haproxy-keep1.martin.com:9696 |

+--------------+------------------------------------------+

[root@vm1-controller1 ~]# openstack endpoint create --region RegionOne \

network admin http://vm2-haproxy-keep1.martin.com:9696

+--------------+------------------------------------------+

| Field | Value |

+--------------+------------------------------------------+

| enabled | True |

| id | 74986fe976ef4e46af3d1dc754737ed8 |

| interface | admin |

| region | RegionOne |

| region_id | RegionOne |

| service_id | e9dba693185b49e5b41aa279cfe7419d |

| service_name | neutron |

| service_type | network |

| url | http://vm2-haproxy-keep1.martin.com:9696 |

+--------------+------------------------------------------+

Verify that the endpoints were added successfully:

Configure HAProxy load balancing:

listen neutron_9696

bind 192.168.7.248:9696

mode tcp

log global

balance source

server nova_vnc 192.168.7.101:9696 check inter 5000 rise 3 fall 3

Deploy the neutron controller

Install neutron on the controller:

[root@vm1-controller1 ~]# yum install openstack-neutron openstack-neutron-ml2 \

openstack-neutron-linuxbridge ebtables

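Networking option 1: provider networks (bridged), configured as follows

Networking option 2: self-service networks (NAT); see the OpenStack Ocata installation guide (part 2), "Configure self-service networks, networking option 2: self-service networks (NAT)"

Edit the neutron configuration file:

Edit /etc/neutron/neutron.conf and complete the following steps:

In the [database] section, configure database access:

[database]

# ...

connection = mysql+pymysql://neutron:neutron123@vm2-haproxy-keep1.martin.com/neutron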

In the [DEFAULT] section, enable the ML2 plug-in and disable additional plug-ins:

[DEFAULT]

# ...

core_plugin = ml2

service_plugins =

In the [DEFAULT] section, configure RabbitMQ message queue access:

[DEFAULT]

# ...

transport_url = rabbit://openstack:123456@vm2-haproxy-keep1.martin.com

rpc_backend = rabbit

In the [DEFAULT] and [keystone_authtoken] sections, configure Identity service access:

[DEFAULT]

# ...

auth_strategy = keystone

[keystone_authtoken]

# ...

auth_uri = http://vm2-haproxy-keep1.martin.com:5000

auth_url = http://vm2-haproxy-keep1.martin.com:35357

memcached_servers = vm2-haproxy-keep1.martin.com:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = neutron

password = neutron

In the [DEFAULT] and [nova] sections, configure Networking to notify Compute of network topology changes:

[DEFAULT]

notify_nova_on_port_status_changes = true

notify_nova_on_port_data_changes = true

[nova]

# ...

auth_url = http://vm2-haproxy-keep1.martin.com:35357

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = nova

password = nova

In the [oslo_concurrency] section, configure the lock path:

[oslo_concurrency]

# ...

lock_path = /var/lib/neutron/tmp

Verify the contents of /etc/neutron/neutron.conf:

[DEFAULT]

auth_strategy = keystone

core_plugin = ml2

service_plugins =

notify_nova_on_port_status_changes = true

notify_nova_on_port_data_changes = true

transport_url = rabbit://openstack:123456@192.168.7.104

rpc_backend = rabbit

[database]

connection = mysql+pymysql://neutron:neutron123@vm2-haproxy-keep1.martin.com/neutron

[keystone_authtoken]

auth_uri = http://vm2-haproxy-keep1.martin.com:5000

auth_url = http://vm2-haproxy-keep1.martin.com:35357

memcached_servers = vm2-haproxy-keep1.martin.com:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = neutron

password = neutron

[nova]

auth_url = http://vm2-haproxy-keep1.martin.com:35357

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = nova

password = nova

[oslo_concurrency]

lock_path = /var/lib/neutron/tmp

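Configure the Modular Layer 2 (ML2) plug-in

The ML2 plug-in uses the Linux bridge mechanism to build layer-2 virtual networking infrastructure for instances.

Edit /etc/neutron/plugins/ml2/ml2_conf.ini and complete the following steps:

In the [ml2] section:

[ml2]

Enable flat and VLAN networks:

type_drivers = flat,vlan

Disable self-service (private) networks:

tenant_network_types =

Enable the Linux bridge mechanism:

mechanism_drivers = linuxbridge

Enable the port security extension driver:

extension_drivers = port_security

In the [ml2_type_flat] section, configure the provider virtual network as a flat network:

[ml2_type_flat]

# ...

flat_networks = provider

In the [securitygroup] section, enable ipset to make security group rules more efficient:

[securitygroup]

# ...

enable_ipset = true

Verify the contents of /etc/neutron/plugins/ml2/ml2_conf.ini: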

[ml2]

type_drivers = flat,vlan

tenant_network_types =

mechanism_drivers = linuxbridge

extension_drivers = port_security

[ml2_type_flat]

flat_networks = internal,external # names used here to distinguish the internal and external networks

[securitygroup]

enable_ipset = true

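Configure the Linux bridge agent

Edit /etc/neutron/plugins/ml2/linuxbridge_agent.ini and complete the following steps:

In the [linux_bridge] section, map the provider virtual network to the provider physical network interface:

[linux_bridge]

physical_interface_mappings = provider:br1

In the [vxlan] section, disable VXLAN overlay networks:

[vxlan]

# ...

enable_vxlan = false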

In the [securitygroup] section, enable security groups and configure the Linux bridge iptables firewall driver:

[securitygroup]

# ...

enable_security_group = true

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

Verify the contents of /etc/neutron/plugins/ml2/linuxbridge_agent.ini:

[linux_bridge]

physical_interface_mappings = internal:eth0,external:eth2

[securitygroup]

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

enable_security_group = true

[vxlan]

enable_vxlan = false
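
The network labels in `flat_networks` (ml2_conf.ini) are expected to match the labels on the left-hand side of `physical_interface_mappings` on every host that should carry those networks. The sketch below compares the two comma-separated values; `mapping_labels` and `labels_match` are hypothetical helper names, and the sample values are the ones from the verification listings above.

```shell
# mapping_labels "label:iface,label:iface": print one network label per line.
mapping_labels() {
    printf '%s\n' "$1" | tr ',' '\n' | cut -d: -f1
}

# labels_match FLAT_NETWORKS MAPPINGS: succeed only if every flat network
# label has an entry in the interface mappings. Hypothetical helper.
labels_match() {
    local net
    for net in $(printf '%s' "$1" | tr ',' ' '); do
        mapping_labels "$2" | grep -qxF "$net" || return 1
    done
}

if labels_match "internal,external" "internal:eth0,external:eth2"; then
    echo "labels consistent"
else
    echo "label mismatch"
fi
```

With `flat_networks = provider`, the same check would fail against `internal:eth0,external:eth2`, which is a quick way to catch a label that was renamed in only one of the two files.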

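Configure the DHCP agent

Edit /etc/neutron/dhcp_agent.ini and complete the following steps:

In the [DEFAULT] section, configure the Linux bridge interface driver and the Dnsmasq DHCP driver, and enable isolated metadata so that instances on provider networks can reach the metadata service over the network:

[DEFAULT]

# ...

interface_driver = linuxbridge

dhcp_driver = neutron.agent.linux.dhcp.Dnsmasq

enable_isolated_metadata = true

Configure the metadata agent

Edit /etc/neutron/metadata_agent.ini and complete the following steps:

In the [DEFAULT] section, configure the metadata host and the shared secret:

[DEFAULT]

# ...

nova_metadata_ip = 192.168.7.101

metadata_proxy_shared_secret=20190621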

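Configure the Compute service to use the Networking service

Edit /etc/nova/nova.conf and complete the following steps:

In the [neutron] section, configure the access parameters, enable the metadata proxy and set the shared secret: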

[neutron]

url = http://vm2-haproxy-keep1.martin.com:9696

auth_url = http://vm2-haproxy-keep1.martin.com:35357

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = neutron

password = neutron

service_metadata_proxy = true

metadata_proxy_shared_secret = 20190621

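Finalize the installation

The Networking service initialization scripts expect a symbolic link, /etc/neutron/plugin.ini, that points to the ML2 plug-in configuration file /etc/neutron/plugins/ml2/ml2_conf.ini. If the symbolic link does not exist, create it with the following command: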

[root@vm1-controller1 ~]# ln -s /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini
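
Because `ln -s` fails when the link already exists, a guarded version is convenient when the step is rerun. A minimal sketch; `ensure_symlink` is a hypothetical helper, and the demonstration uses a temporary directory rather than /etc/neutron.

```shell
# ensure_symlink TARGET LINK: create LINK -> TARGET unless LINK already exists.
# Hypothetical helper; an existing file or link at LINK is left untouched.
ensure_symlink() {
    local target="$1" link="$2"
    if [ -L "$link" ] || [ -e "$link" ]; then
        return 0
    fi
    ln -s "$target" "$link"
}

# Demonstration in a scratch directory; the second call is a no-op.
scratch="$(mktemp -d)"
touch "$scratch/ml2_conf.ini"
ensure_symlink "$scratch/ml2_conf.ini" "$scratch/plugin.ini"
ensure_symlink "$scratch/ml2_conf.ini" "$scratch/plugin.ini"
ls -l "$scratch/plugin.ini"
rm -rf "$scratch"
```

On the controller the call would be `ensure_symlink /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini`.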

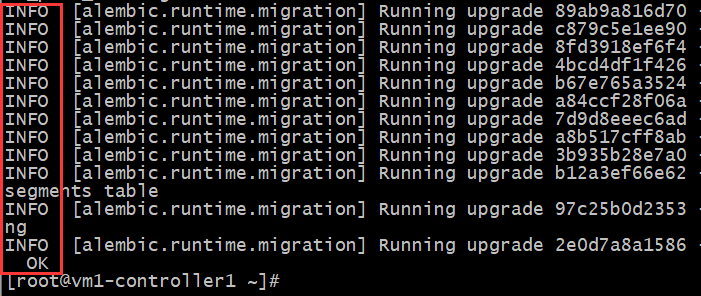

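Initialize the database:

Database population happens at this late stage for Networking because the script requires complete server and plug-in configuration files. If the sync fails, first start the neutron services as described below and then retry.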

[root@vm1-controller1 ~]# su -s /bin/sh -c "neutron-db-manage --config-file /etc/neutron/neutron.conf \

--config-file /etc/neutron/plugins/ml2/ml2_conf.ini upgrade head" neutron

Restart the nova API service:

[root@vm1-controller1 ~]# systemctl restart openstack-nova-api.service

Start the Networking services and configure them to start when the system boots.

For both networking options:

[root@vm1-controller1 ~]# systemctl enable neutron-server.service \

neutron-linuxbridge-agent.service neutron-dhcp-agent.service \

neutron-metadata-agent.service

[root@vm1-controller1 ~]# systemctl start neutron-server.service \

neutron-linuxbridge-agent.service neutron-dhcp-agent.service \

neutron-metadata-agent.service

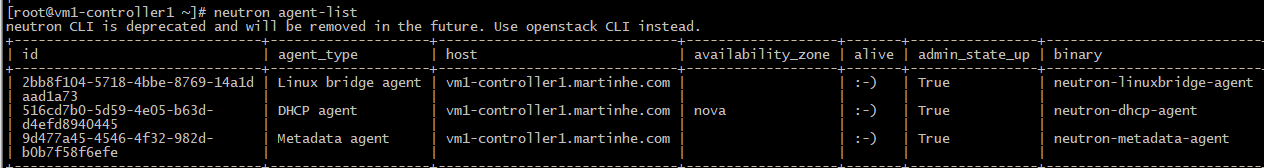

Verify that the neutron controller agents registered successfully:

[root@vm1-controller1 ~]# neutron agent-list

Script to restart the neutron controller services:

[root@vm1-controller1 ~]# vim scripts/neutron_restart.sh

#!/bin/bash

systemctl restart neutron-server.service neutron-linuxbridge-agent.service neutron-dhcp-agent.service neutron-metadata-agent.service openstack-nova-api.service

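For networking option 2 (self-service networks), also enable the layer-3 service and set it to start at boot:

# systemctl enable neutron-l3-agent.service

# systemctl start neutron-l3-agent.service

Install and configure the compute node

Install the components

# yum install openstack-neutron-linuxbridge ebtables ipset

Edit /etc/neutron/neutron.conf and complete the following steps:

In the [database] section, comment out any connection options, because compute nodes do not access the database directly.

In the [DEFAULT] section, configure RabbitMQ message queue access: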

[DEFAULT]

# ...

transport_url = rabbit://openstack:123456@192.168.7.104

rpc_backend = rabbit

In the [DEFAULT] and [keystone_authtoken] sections, configure Identity service access:

[DEFAULT]

# ...

auth_strategy = keystone

[keystone_authtoken]

# ...

auth_uri = http://vm2-haproxy-keep1.martin.com:5000

auth_url = http://vm2-haproxy-keep1.martin.com:35357

memcached_servers = vm2-haproxy-keep1.martin.com:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = neutron

password = neutron

In the [oslo_concurrency] section, configure the lock path:

[oslo_concurrency]

# ...

lock_path = /var/lib/neutron/tmp

Verify the contents of /etc/neutron/neutron.conf:

[DEFAULT]

auth_strategy = keystone

transport_url = rabbit://openstack:123456@192.168.7.104

rpc_backend = rabbit

[keystone_authtoken]

auth_uri = http://vm2-haproxy-keep1.martin.com:5000

auth_url = http://vm2-haproxy-keep1.martin.com:35357

memcached_servers = vm2-haproxy-keep1.martin.com:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = neutron

password = neutron

[oslo_concurrency]

lock_path = /var/lib/neutron/tmp
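The verification above can be automated. The following is a minimal sketch, assuming Python 3 and the standard `configparser` module; the function name `missing_options` and the `REQUIRED` table are hypothetical and only list the options that this guide sets on the compute node.

```python
import configparser

# Options this guide sets on the compute node, keyed by section.
REQUIRED = {
    "DEFAULT": ["auth_strategy", "transport_url"],
    "keystone_authtoken": [
        "auth_uri", "auth_url", "memcached_servers", "auth_type",
        "project_domain_name", "user_domain_name", "project_name",
        "username", "password",
    ],
    "oslo_concurrency": ["lock_path"],
}


def missing_options(conf_text, required=REQUIRED):
    """Return a sorted list of 'section/option' names absent from conf_text."""
    # interpolation=None keeps '%' characters literal; strict=False
    # tolerates repeated sections or options in the file.
    parser = configparser.ConfigParser(interpolation=None, strict=False)
    parser.read_string(conf_text)
    missing = []
    for section, options in required.items():
        for option in options:
            if section == "DEFAULT":
                # configparser exposes [DEFAULT] through defaults().
                present = option in parser.defaults()
            else:
                # Note: has_option() also matches values inherited
                # from [DEFAULT].
                present = parser.has_option(section, option)
            if not present:
                missing.append(f"{section}/{option}")
    return sorted(missing)
```

For example, `missing_options(open("/etc/neutron/neutron.conf").read())` should return an empty list once every step above has been applied.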

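Configure networking option 1: provider networks (bridged)

Configure the Linux bridge agent

The Linux bridge agent builds layer-2 virtual networks for instances and handles security group rules.

Edit the /etc/neutron/plugins/ml2/linuxbridge_agent.ini file and complete the following actions:

In the [linux_bridge] section, map the provider virtual network to the provider physical network interface: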

[linux_bridge]

physical_interface_mappings = provider:br1

In the [vxlan] section, disable VXLAN overlay networks:

[vxlan]

enable_vxlan = false

In the [securitygroup] section, enable security groups and configure the Linux bridge iptables firewall driver:

[securitygroup]

# ...

enable_security_group = true

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

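Verify the contents of /etc/neutron/plugins/ml2/linuxbridge_agent.ini. In this deployment the mapping names the internal and external networks rather than the provider:br1 example above: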

[linux_bridge]

physical_interface_mappings = internal:eth0,external:eth2

[securitygroup]

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

enable_security_group = true

[vxlan]

enable_vxlan = false

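Configure the Compute service to use the Networking service

Edit the /etc/nova/nova.conf file and complete the following actions:

In the [neutron] section, configure the access parameters, enable the metadata proxy, and set the shared secret: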

[neutron]

url = http://vm2-haproxy-keep1.martin.com:9696

auth_url = http://vm2-haproxy-keep1.martin.com:35357

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = neutron

password = neutron

service_metadata_proxy = true

metadata_proxy_shared_secret = 20190621
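The metadata_proxy_shared_secret value has to be identical in nova.conf and in the metadata agent configuration on the controller, and a date-like value such as the one above is easy to guess. As a hedged suggestion, a random value can be generated with the standard `secrets` module; the helper name `new_metadata_secret` is hypothetical.

```python
import secrets


def new_metadata_secret(num_bytes=16):
    """Return a random hex string (2 * num_bytes characters long)."""
    return secrets.token_hex(num_bytes)


# Print a line that can be pasted into the [neutron] section.
print("metadata_proxy_shared_secret =", new_metadata_secret())
```

Whatever value is chosen, the same string must be used on every node that sets this option.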

Finalize the installation

Restart the Compute service:

# systemctl restart openstack-nova-compute.service

Start the Linux bridge agent and configure it to start at boot:

# systemctl enable neutron-linuxbridge-agent.service

# systemctl start neutron-linuxbridge-agent.service

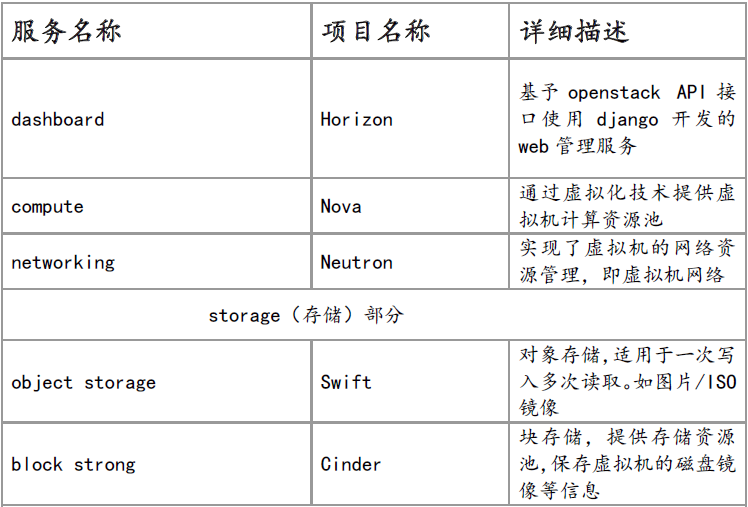

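Deploy the management service horizon

Horizon is OpenStack's graphical interface for displaying and operating the other components. It communicates with other services, such as the Image, Compute, and Networking services, through their APIs. Horizon is built on Python Django and is served over the web through Apache's WSGI module. It only needs its configuration file changed to connect to keystone. The procedure is as follows:

Install horizon on the controller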

[root@vm1-controller1 ~]# yum install openstack-dashboard -y

Edit the /etc/openstack-dashboard/local_settings file and complete the following actions:

[root@vm1-controller1 ~]# vim /etc/openstack-dashboard/local_settings

OPENSTACK_HOST = "192.168.7.248"

ALLOWED_HOSTS = ['*',]

Configure the memcached session storage service:

Add the following:

SESSION_ENGINE = 'django.contrib.sessions.backends.cache'

CACHES = {

'default': {

'BACKEND': 'django.core.cache.backends.memcached.MemcachedCache',

'LOCATION': '192.168.7.248:11211',

},

}

Enable the Identity API version 3:

OPENSTACK_KEYSTONE_URL = "http://%s:5000/v3" % OPENSTACK_HOST

Enable support for domains:

OPENSTACK_KEYSTONE_MULTIDOMAIN_SUPPORT = True

Configure API versions:

OPENSTACK_API_VERSIONS = {

"identity": 3,

"image": 2,

"volume": 2,

}

Configure Default as the default domain for users that you create via the dashboard:

OPENSTACK_KEYSTONE_DEFAULT_DOMAIN = 'Default'

Configure user as the default role for users that you create via the dashboard:

OPENSTACK_KEYSTONE_DEFAULT_ROLE = "user"

For networking option 1, disable support for layer-3 networking services:

OPENSTACK_NEUTRON_NETWORK = {

...

'enable_router': False,

'enable_quotas': False,

'enable_distributed_router': False,

'enable_ha_router': False,

'enable_lb': False,

'enable_firewall': False,

'enable_vpn': False,

'enable_fip_topology_check': False,

}

For networking option 2, enable support for layer-3 networking services (so that options such as the network topology are displayed):

OPENSTACK_NEUTRON_NETWORK = {

...

'enable_router': True,

'enable_quotas': True,

'enable_distributed_router': True,

'enable_ha_router': True,

'enable_lb': True,

'enable_firewall': True,

'enable_vpn': True,

'enable_fip_topology_check': True,

}

Optionally, configure the time zone:

TIME_ZONE = "Asia/Shanghai"

Verify the contents of /etc/openstack-dashboard/local_settings:

ALLOWED_HOSTS = ['*',]

OPENSTACK_API_VERSIONS = {

"identity": 3,

"image": 2,

"volume": 2,

}

OPENSTACK_KEYSTONE_MULTIDOMAIN_SUPPORT = True

OPENSTACK_KEYSTONE_DEFAULT_DOMAIN = 'Default'

SESSION_ENGINE = 'django.contrib.sessions.backends.cache'

CACHES = {

'default': {

'BACKEND': 'django.core.cache.backends.memcached.MemcachedCache',

'LOCATION': '192.168.7.248:11211',

},

}

OPENSTACK_NEUTRON_NETWORK = {

'enable_router': False,

'enable_quotas': False,

'enable_ipv6': False,

'enable_distributed_router': False,

'enable_ha_router': False,

'enable_lb': False,

'enable_firewall': False,

'enable_vpn': False,

'enable_fip_topology_check': False,

#...

}

TIME_ZONE = "Asia/Shanghai"
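For networking option 1, every layer-3 flag in OPENSTACK_NEUTRON_NETWORK should be set to False explicitly. The following minimal sketch lists any flag that is not; the names `L3_FLAGS` and `not_disabled` are hypothetical, and the flag list is taken from the settings shown above.

```python
# Layer-3 related keys that the option 1 configuration sets to False.
L3_FLAGS = (
    "enable_router",
    "enable_quotas",
    "enable_distributed_router",
    "enable_ha_router",
    "enable_lb",
    "enable_firewall",
    "enable_vpn",
    "enable_fip_topology_check",
)


def not_disabled(neutron_network):
    """Return the flags in L3_FLAGS whose value is not explicitly False."""
    return [key for key in L3_FLAGS if neutron_network.get(key) is not False]


# Example with the option 1 values from this guide: nothing is reported.
option1 = {key: False for key in L3_FLAGS}
print(not_disabled(option1))
```

A missing key is reported as well, on the assumption that Horizon would then fall back to its own default for it.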

Restart the Apache and memcached services:

systemctl restart httpd.service

systemctl restart memcached.service
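After the restart, a quick TCP check can confirm that the services accept connections again. This is a minimal sketch using only the standard `socket` module; the helper name `port_open` is hypothetical, and it only tests that a port is reachable, not that the service behind it is healthy.

```python
import socket


def port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unreachable hosts.
        return False
```

For example, `port_open("192.168.7.248", 11211)` checks the memcached address used in local_settings. Checking the dashboard with `port_open("192.168.7.248", 80)` assumes that httpd is published on port 80 of that address.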

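Launch an instance:

Create virtual networks

Create the provider network (external)

Create the network:

First, edit the /etc/neutron/plugins/ml2/linuxbridge_agent.ini configuration file and add the physical interfaces that back the internal and external networks, as follows: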

[root@vm1-controller1 ~]# vim /etc/neutron/plugins/ml2/linuxbridge_agent.ini

physical_interface_mappings = internal:eth0,external:eth2

Edit the /etc/neutron/plugins/ml2/ml2_conf.ini configuration file and add the internal and external network names, as follows:

[root@vm1-controller1 ~]# vim /etc/neutron/plugins/ml2/ml2_conf.ini

[ml2_type_flat]

flat_networks = internal,external
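Each name listed in flat_networks needs a matching entry in physical_interface_mappings, otherwise the agent has no interface to attach that network to. The following minimal sketch compares the two values; the function names `parse_mappings` and `unmapped_flat_networks` are hypothetical.

```python
def parse_mappings(value):
    """Parse 'net1:if1,net2:if2' into {'net1': 'if1', 'net2': 'if2'}."""
    mappings = {}
    for item in value.split(","):
        item = item.strip()
        if not item:
            continue
        network, sep, interface = item.partition(":")
        if not sep or not network or not interface:
            raise ValueError(f"malformed mapping entry: {item!r}")
        mappings[network.strip()] = interface.strip()
    return mappings


def unmapped_flat_networks(flat_networks, mappings_value):
    """Return flat network names that have no physical interface mapping."""
    mapped = parse_mappings(mappings_value)
    names = [n.strip() for n in flat_networks.split(",") if n.strip()]
    # '*' in flat_networks allows any name, so there is nothing to compare.
    if "*" in names:
        return []
    return [n for n in names if n not in mapped]


# Values from this guide: both networks are mapped, so the list is empty.
print(unmapped_flat_networks("internal,external", "internal:eth0,external:eth2"))
```

With the earlier example mapping `provider:br1`, the same call would report both `internal` and `external` as unmapped.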

Restart the neutron services:

[root@vm1-controller1 ~]# bash scripts/neutron_restart.sh

Create the networks: bridged and host-only modes

Create a shared, external network named external-net, with network type flat, on the physical network named external:

[root@vm1-controller1 ~]# openstack network create --share --external \

--provider-physical-network external \

--provider-network-type flat external-net

+---------------------------+--------------------------------------+

| Field | Value |

+---------------------------+--------------------------------------+

| admin_state_up | UP |

| availability_zone_hints | |

| availability_zones | |

| created_at | 2019-06-22T12:17:37Z |

| description | |

| dns_domain | None |

| id | a91d6aaa-0e2f-426d-9d55-50c2eb04c420 |

| ipv4_address_scope | None |

| ipv6_address_scope | None |

| is_default | None |

| mtu | 1500 |

| name | external-net |

| port_security_enabled | True |

| project_id | 604257738d254dd6a0dafc91c962b6bf |

| provider:network_type | flat |

| provider:physical_network | external |

| provider:segmentation_id | None |

| qos_policy_id | None |

| revision_number | 4 |

| router:external | External |

| segments | None |

| shared | True |

| status | ACTIVE |

| subnets | |

| updated_at | 2019-06-22T12:17:37Z |

+---------------------------+--------------------------------------+

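On the external-net network, create a subnet named external-sub, specifying the start and end IP addresses of the DHCP allocation pool, the DNS server address, the gateway address, and the subnet CIDR: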

[root@vm1-controller1 ~]# openstack subnet create --network external-net \

--allocation-pool start=192.168.7.120,end=192.168.7.130 \

--dns-nameserver 202.106.0.20 --gateway 192.168.7.254 \

--subnet-range 192.168.0.0/21 external-sub
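The subnet range 192.168.0.0/21 spans 192.168.0.0 through 192.168.7.255, so the allocation pool and the gateway used in the command above both fall inside it. The following minimal sketch, using only the standard `ipaddress` module, checks such a plan before the command is run; the function names `check_subnet_plan` and `pool_size` are hypothetical.

```python
import ipaddress


def check_subnet_plan(cidr, pool_start, pool_end, gateway):
    """Return a list of problems found in a subnet plan (empty if none)."""
    network = ipaddress.ip_network(cidr)
    start = ipaddress.ip_address(pool_start)
    end = ipaddress.ip_address(pool_end)
    gw = ipaddress.ip_address(gateway)
    problems = []
    for label, addr in (("pool start", start), ("pool end", end), ("gateway", gw)):
        if addr not in network:
            problems.append(f"{label} {addr} is outside {network}")
    if start > end:
        problems.append("pool start is after pool end")
    if start <= gw <= end:
        problems.append(f"gateway {gw} falls inside the allocation pool")
    return problems


def pool_size(pool_start, pool_end):
    """Number of addresses in the inclusive range pool_start..pool_end."""
    first = int(ipaddress.ip_address(pool_start))
    last = int(ipaddress.ip_address(pool_end))
    return last - first + 1


# Values taken from the subnet create command above.
print(check_subnet_plan("192.168.0.0/21", "192.168.7.120", "192.168.7.130", "192.168.7.254"))
print(pool_size("192.168.7.120", "192.168.7.130"))
```

The pool from 192.168.7.120 to 192.168.7.130 holds 11 addresses, which caps the number of ports that DHCP can serve on this subnet.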